There is a conversation that happens, in various forms, in nearly every manufacturing organization I have observed over twenty-five years in this industry. It happens in budget reviews, in operational excellence steering committees, in the hallway outside a QA office, and — most damagingly — in the unexpressed assumptions that shape how an organization is actually structured and run.

The conversation goes something like this: We spend too much on compliance. If we could just get leaner — cut the forms, shrink the quality team, streamline the approvals — we would move faster, cost less, and be more competitive. Quality and compliance are the tax we pay for being in a regulated industry. They are necessary. But they are waste.

This belief is so deeply embedded in some organizations that it never even surfaces as a conversation. It is just the water they swim in. Quality exists to satisfy regulators. Lean exists to eliminate waste. Regulators require quality. Therefore, quality is irreducible waste that must be minimized subject to regulatory tolerance.

I want to argue that this framing is not merely incomplete — it is structurally wrong in a way that causes specific, traceable organizational failures. And I want to use the frameworks these organizations claim to love — lean thinking and the Theory of Constraints — to show exactly why.

The Problem With “Necessary Non-Value-Added”

Let’s start with the lean taxonomy, because the misreading begins there.

Lean thinking, as Womack and Jones articulated it in their 1996 codification of the Toyota Production System, begins with a deceptively simple question: what does the customer value? Value is defined as a capability delivered to the customer at the right time, at the right quality, at the right price — as the customer defines it, not as we do. Everything else is waste. And waste, in the lean vocabulary, comes in varieties that have been systematically catalogued as the seven forms of muda: overproduction, waiting, transport, over-processing, inventory, motion, and defects.

This taxonomy is useful. But the translation of lean from Toyota to regulated industries has consistently produced a subtle and damaging error: the misclassification of compliance activity.

Standard lean frameworks distinguish three types of activities:

- Value-added (VA): transforms the product or service in a way the customer is willing to pay for, done right the first time

- Necessary non-value-added (NNVA): does not directly create value, but cannot currently be eliminated — regulatory compliance, documentation, inspections

- Pure non-value-added (NVA): contributes nothing to the customer and should be eliminated

The intent of this classification is sound. But in practice, the “necessary” in NNVA becomes heard as “tolerated.” And tolerated waste, in organizations under cost pressure, becomes something to minimize — to satisfy the regulator with the least possible resource investment. The goal shifts from building quality into the process to performing the ritual that proves quality exists.

This is compliance theater. And it is not lean. It is the opposite of lean.

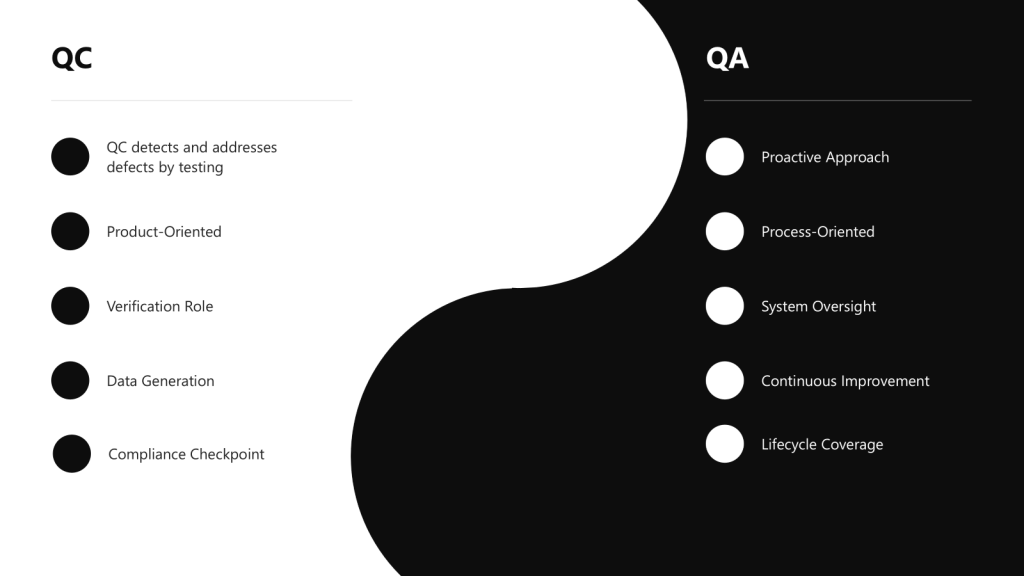

The lean enterprise insight that most organizations never reach is this: compliance activity, properly understood, is not in the NNVA category at all. When it is functioning correctly, it is in the value-added category — because patients, the ultimate customers of pharmaceutical manufacturing, explicitly require that their medicines be manufactured in a controlled, verified, and trustworthy way. Regulatory requirements are the formalized expression of what patients and society are, in fact, willing to pay for. Meeting them is not a tax on production. It is production’s purpose.

Lean Enterprise Institute’s own post-Womack thinking, which increasingly frames lean around value creation rather than waste elimination, is instructive here: “Why it’s better to focus on value, not waste.” The insight is that waste-focused thinking is derivative. You identify waste by understanding value first. Organizations that never ask what quality really provides to the patient — what value their compliance system is actually creating — will inevitably misclassify it.

What the Theory of Constraints Sees

If lean thinking provides the value framework that should reframe compliance, the Theory of Constraints provides the systems lens that explains why misclassifying compliance is so operationally dangerous.

Eli Goldratt, who introduced TOC through his 1984 book The Goal, summarized his entire philosophy in a single word when challenged by an interviewer: focus. TOC’s central observation is that every system is limited in its throughput by a single constraint — the weakest link in the chain — and that improving anything other than the constraint does not improve the system. In fact, local optimization of non-constraint resources can actively harm the system by increasing WIP, creating queues at the constraint, and masking the real problem.
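A minimal sketch of that central observation, assuming a simple serial line where each stage has a fixed weekly capacity. The stage names and numbers are hypothetical and chosen only for illustration.

```python
# Hypothetical stage capacities in batches per week. These are illustrative
# assumptions, not data from any real plant.
capacities = {"dispensing": 12, "granulation": 10, "compression": 6, "packaging": 9}

def system_throughput(stage_capacities):
    """A serial line can ship no faster than its slowest stage."""
    return min(stage_capacities.values())

def constraint(stage_capacities):
    """Name of the stage with the lowest capacity."""
    return min(stage_capacities, key=stage_capacities.get)

baseline = system_throughput(capacities)

# Local optimization: add capacity at a non-constraint stage.
improved_elsewhere = dict(capacities, packaging=15)
# Focused improvement: add capacity at the constraint itself.
improved_constraint = dict(capacities, compression=8)

print(constraint(capacities), baseline)
print(system_throughput(improved_elsewhere))   # unchanged from baseline
print(system_throughput(improved_constraint))  # higher than baseline
```

Adding packaging capacity changes nothing at the system level, while the same kind of investment at compression raises throughput. Real plants have variability, shared resources, and rework loops that this sketch ignores.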

Goldratt’s five focusing steps are the operating framework:

1. Identify the constraint: the single resource or process that limits system throughput

2. Exploit the constraint: squeeze every unit of capacity from it without additional investment

3. Subordinate everything else to the constraint: make all other decisions serve the constraint’s needs

4. Elevate the constraint: if still limiting, invest to increase its capacity

5. Repeat: never let inertia become the new constraint

The insight for quality and compliance comes from steps two and three, and it is counterintuitive.

Poor quality before the constraint wastes constraint capacity. Every defect, every rework event, every out-of-specification result that reaches the constraint forces the constraint to process something that should have been caught earlier, or to process it again. A 5% improvement in quality yield at the constraint (a modest target) can produce a 50% improvement in system profit in a business whose operating expense is largely fixed and whose net profit is around 10% of throughput, because every additional good unit through the constraint is additional system throughput that falls almost entirely to the bottom line. That is not a theoretical number. That is the arithmetic of constrained systems.
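The arithmetic can be checked with a few lines of Python. This is a sketch under stated assumptions: throughput is used in the TOC sense (sales minus truly variable cost), operating expense is treated as fixed, every extra good unit from the constraint can be sold, and the figures 100 and 90 are hypothetical.

```python
def net_profit(throughput, operating_expense):
    """TOC net profit: throughput minus operating expense."""
    return throughput - operating_expense

def profit_gain_pct(throughput, operating_expense, yield_gain):
    """Percent change in net profit when constraint yield rises by yield_gain."""
    before = net_profit(throughput, operating_expense)
    after = net_profit(throughput * (1 + yield_gain), operating_expense)
    return (after - before) / before * 100

# Assumed figures: throughput of 100 units of money, fixed operating expense of 90.
gain = profit_gain_pct(throughput=100.0, operating_expense=90.0, yield_gain=0.05)
print(f"profit change: {gain:.0f}%")
```

The leverage comes entirely from the ratio of operating expense to throughput. With fatter margins the same yield gain produces a smaller percentage change in profit, so the 50% figure should be read as an illustration of the mechanism rather than a universal constant.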

Poor quality after the constraint is equally damaging. Rework events downstream consume capacity that was produced at the constraint — the most expensive capacity in the system. A batch that fails release review, a product recall, a regulatory hold — each of these destroys throughput that originated at the constraint and cannot be recovered.

Now run this logic through a pharmaceutical manufacturing operation and ask: what happens when the quality system is treated as a cost to minimize? When the Quality Unit is under-resourced, change control is a bureaucratic hurdle rather than a knowledge management tool, CAPA is reactive rather than preventive, and environmental monitoring produces aspirational data rather than representative data?

What happens is that the quality system stops protecting the constraint. Instead of catching defects early and cheaply, it catches them late and expensively, or not at all, until a regulator finds them. The cost of poor quality does not disappear when you reduce the quality function. It is deferred, and it compounds. A widely cited rule of thumb in quality management, often called the 1-10-100 rule, holds that the cost of a defect increases roughly tenfold at each major processing point, and by a factor of one hundred if the defective product reaches distribution. The invisible ledger is always open. You are either paying now, in quality investment, or you are accruing a much larger liability for later.
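To make the deferred liability concrete, here is a small sketch that applies the tenfold escalation to two hypothetical detection profiles. The stage names, multipliers, and defect counts are assumptions for illustration, not measured data.

```python
# Assumed relative cost of handling one defect, by the stage where it is caught.
STAGE_COST_MULTIPLIER = {"in_process": 1, "batch_release": 10, "distribution": 100}

def cost_of_poor_quality(defects_caught_by_stage, base_cost=1):
    """Total relative cost given how many defects are caught at each stage."""
    return sum(
        count * STAGE_COST_MULTIPLIER[stage] * base_cost
        for stage, count in defects_caught_by_stage.items()
    )

# The same 100 defects, caught in different places (hypothetical profiles).
well_resourced = {"in_process": 80, "batch_release": 18, "distribution": 2}
under_resourced = {"in_process": 30, "batch_release": 50, "distribution": 20}

print(cost_of_poor_quality(well_resourced))
print(cost_of_poor_quality(under_resourced))
```

The defect count is identical in both profiles. Only the point of detection moves, and under these assumed multipliers that alone changes the total cost by more than a factor of five.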

Compliance as Variation Reduction — The Real Alignment

There is a deeper argument to be made here, one that goes beyond the accounting of defect costs.

Lean and compliance share a root cause.

Lean compliance theory, drawing on cybernetic systems thinking and promise theory, articulates it cleanly: waste is the manifestation of risk that has become reality. The root cause of both waste and risk is uncertainty — what lean practitioners call variation or variability. The act of regulation — through feedback and feedforward controls — reduces that variation. This is the fundamental principle underlying both Lean Six Sigma in operations and compliance functions like quality management and safety programs. Both regulate processes to reduce uncertainty. Both create the stable, predictable conditions that enable efficient production.

Think about what pharmaceutical GMP actually requires, stripped of its bureaucratic expression. It requires that processes be defined, controlled, verified, and improved. It requires that deviations be investigated and root causes addressed. It requires that changes be evaluated for their effect on quality before implementation. It requires that data be accurate, complete, and contemporaneous. These are not arbitrary regulatory preferences. They are the description of a system that has low variation, high predictability, and consequently high throughput.

In Womack and Jones’s framework, the third principle of lean thinking is flow — removing the obstacles that cause work to stop, wait, batch, and pile up. A quality system that works correctly is flow. It prevents the batch failures, the contamination events, the regulatory holds, the supply disruptions that break flow catastrophically. The lean practitioner who sees GMP documentation as an interruption to flow has misread both lean and GMP.

The 3Ms of waste in lean thinking — muda (waste), mura (unevenness), and muri (overburden) — are illuminating here. An underpowered, compliance-theater quality system does not eliminate any of these. It creates all three:

- Muda in the form of failed batches, investigations, reprocessing, rework, and recalls — the most expensive forms of waste in pharmaceutical manufacturing

- Mura in the form of uneven production flow punctuated by deviations, regulatory actions, and supply disruptions — exactly the opposite of what lean seeks to achieve

- Muri in the form of overburden on operators and quality staff who are simultaneously trying to run a manufacturing operation and manage the fallout from a quality system that was never built to actually prevent problems

A compliance system that is properly resourced, well-designed, and genuinely embedded in operations reduces muda, mura, and muri. That is the lean outcome. The path to lean pharmaceutical manufacturing runs through quality, not around it.

The Failure Modes: Where Organizations Actually Go Wrong

Having established the theoretical case, let me be direct about what the failure modes actually look like. They are not hypothetical. They are documented, expensive, and recurring.

The Cost-Cutting Misapplication of Lean

The most visible example in recent history is Boeing’s 737 MAX program.

Boeing was once a genuine lean practitioner — an organization that had absorbed Toyota’s thinking deeply enough to produce an extraordinary engineering track record. What happened in the 737 MAX era was not lean. It was what lean practitioners have called L.A.M.E. — Lean As Misguidedly Executed. Leadership used the language and tools of lean to justify cost-cutting and schedule compression, while systematically stripping out the quality oversight that lean actually depends on.

Suppliers were pressured to cut costs by 15% under “Partnering for Success” programs. Engineering and quality specialist positions were eliminated. The FAA’s oversight authority was progressively delegated back to Boeing’s own employees. And after the door plug of a 737 MAX 9 blew out during an Alaska Airlines flight at 16,000 feet in January 2024, a subsequent FAA audit found Boeing had failed 33 of 89 quality control standards.

The 737 MAX grounding alone cost over $20 billion in direct expenses, compensation, and legal settlements. Boeing’s market share in commercial aviation declined as Airbus surpassed them in orders and deliveries. Ongoing quality issues caused delivery halts and revenue losses. The cost of eliminating “unnecessary” quality oversight turned out to be far larger than the overhead that was eliminated.

The lean post-mortem is unambiguous: “Boeing executives failed to lead, waved off lean.” The failure was not that lean was applied — it was that the actual principles of lean were abandoned in favor of their most superficial interpretation (cut costs, move faster) while their substance (build quality in, respect people, create stable flow) was ignored. As one analysis put it plainly: “Lean isn’t about cost-cutting — it’s about flow, quality, and customer value. When Lean is used as a blunt instrument for savings, it destroys the very efficiencies it’s meant to create.”

The Compliance Theater Misapplication

If Boeing represents lean misapplied to destroy quality, Ranbaxy represents the complementary failure: a compliance system that was performed rather than practiced.

The Ranbaxy Laboratories case is now a standard reference in pharmaceutical regulatory enforcement. In 2013, Ranbaxy USA pleaded guilty to felony charges and agreed to pay $500 million to resolve charges relating to the manufacture and distribution of adulterated drugs. The specific violations tell the story precisely: stability testing conducted weeks or months after the dates reported to the FDA; stability tests run on the same day rather than at prescribed intervals months apart; samples stored in conditions that did not meet specifications without disclosure. Batch records from all manufacturing sites were found deficient.

What happened at Ranbaxy was not a series of individual compliance lapses. It was a quality system that existed primarily as documentation — as evidence for regulators — rather than as a genuine operational control. The effort spent on making things look compliant vastly exceeded the effort spent on being compliant. That is the ultimate form of compliance theater: the appearance of quality activity without its substance.

The TOC lens is revealing here. If the quality system is not actually catching defects and preventing problems, where is the constraint? In the case of a compliance-theater operation, the constraint is regulatory scrutiny itself. The organization is spending significant resources managing the appearance of compliance, managing the relationship with regulators, responding to warning letters, and paying settlements — all of which are forms of waste so catastrophic they dwarf any savings that were made by underinvesting in the quality system. The “constraint” they failed to identify was their own integrity.

Toyota Got Lost

Toyota’s own history over the last two decades is a reminder that no philosophy, however elegant, confers immunity. The company that codified the Toyota Production System and became synonymous with lean excellence has also experienced very public quality and compliance crises, most notably the 2009–2011 unintended acceleration recalls and a series of subsequent safety campaigns. These episodes are not just automotive gossip; for a regulated-industry audience, they are a case study in how even a mature lean culture can drift under growth pressure, global complexity, and an erosion of problem-solving discipline.

The 2009–2011 crisis centered on reports of sudden unintended acceleration involving millions of Toyota and Lexus vehicles worldwide, triggering recalls for floor mat entrapment, “sticking” accelerator pedals, and software updates for anti-lock braking in hybrids. NHTSA, the U.S. safety regulator, and the NASA engineers it enlisted ultimately found no evidence of a systemic electronic throttle defect, but the investigation did identify concrete mechanical and design issues (pedals slow to return to idle, floor mats trapping pedals), and NHTSA criticized Toyota for delayed, fragmented defect reporting and recall initiation. In parallel, plaintiffs’ experts highlighted software safety weaknesses and single‑points‑of‑failure in throttle control logic, arguing that the company’s legendary jidoka had not fully migrated into software-era hazard analysis and safety-critical code practices.

Operationally, the recall crisis broke some of the myths around Toyota’s infallibility. At its peak, Toyota recalled nearly eight million vehicles in the U.S. for unintended acceleration‑related issues, with multiple waves of actions as new failure modes and affected models were identified. Internal documents and U.S. Department of Transportation timelines show a pattern that should look uncomfortably familiar to anyone in pharma: early field signals treated as noise, hesitance to escalate to formal defect status, narrow-scope countermeasures that addressed symptoms (floor mats) while ignoring systemic design or process questions, and a compliance posture that was more defensive than transparent until the crisis forced a reset. The financial and reputational consequences were significant—billions in recall and litigation costs and a visible dent in Toyota’s carefully cultivated quality halo.

Nor did the challenges end there. In the 2010s and 2020s Toyota has continued to run substantial safety campaigns: Takata airbag inflator replacements across many models; software issues that could deactivate ABS and traction control in certain RAV4s; and repeated fuel pump recalls for stalling risk across Toyota and Lexus vehicles, including an expanded 2025 campaign to replace high‑pressure fuel pumps with improved designs at no cost to customers. In each case, the factual pattern is that defects made it into production fleets at scale, often with multi‑year lag between field emergence and comprehensive corrective action. For a lean practitioner, this is the signature of a detection and escalation system that is no longer as hypersensitive as the original Toyota plants were in the era when any worker could and would pull the andon cord, and the company would swarm the problem until it was structurally addressed.

The internal and external post‑mortems on the unintended acceleration crisis are blunt about cultural drift. Analyses from academics and management scholars describe how rapid global expansion, aggressive cost targets, and supply chain complexity strained Toyota’s traditional problem‑solving routines and engineering review cycles. The incident forced a re‑emphasis on the very principles the Toyota Way is built on—genchi genbutsu (go and see), nemawashi (consensus‑building around facts), and a preference for stopping and fixing problems at the source rather than managing around them. Toyota has since tightened defect reporting to regulators, institutionalized global quality task forces, and expanded its use of standard work and software safety analysis as active problem‑solving tools, not just documentation for compliance. The lesson for pharma is not that “even Toyota has recalls,” which is a trivial observation, but that even the originator of lean can drift into treating compliance and external reporting as transactional obligations when business pressure mounts—and that recovering from that drift requires a deliberate recommitment to treating safety and quality as constraints around which the system must be designed, not as externalities to be managed.

The Quality Unit Authority Problem

More recently, and closer to home in pharmaceutical manufacturing, the pattern of Quality Unit failures in FDA warning letters documents a systemic organizational failure that follows a recognizable logic.

In 2025, FDA issued warning letters to pharmaceutical companies in China, India, and Malaysia, each citing Quality Unit deficiencies. The Chinese firm failed to establish an adequate Quality Unit with authority to ensure compliance. The Indian firm’s Quality Unit failed to maintain data integrity — torn batch records, damaged testing chromatograms, improperly completed forms. The Malaysian facility’s Quality Unit failed to provide adequate oversight of its OTC products. FDA inspection data shows Quality Unit-related citations in 6.2% of US facilities versus 23.1% in Asian operations — reflecting not a cultural difference in rigor but a structural difference in how the Quality Unit is positioned within organizational hierarchies.

These failures have a common root. When the Quality Unit lacks authority — when it is organizationally subordinated to production, when its resistance to release decisions is treated as an obstacle rather than a protection, when its resource requests are chronically undermet — it cannot perform its function. And in TOC terms, this is precisely the problem of failing to subordinate everything to the constraint.

In a pharmaceutical manufacturing system, quality assurance of the product — the thing that makes it safe and effective for patients — is the constraint on throughput in the most important sense. Not in the sense that quality should be slow or bureaucratic. But in the sense that releasing a product that is not genuinely safe and effective is not throughput. It is waste of the most catastrophic variety. A Quality Unit with insufficient authority to slow or stop a release decision it has serious concerns about is a quality system that cannot prevent the worst outcomes.

The FDA’s position is explicit: the Quality Unit is “not just a compliance requirement, but a foundational function in pharmaceutical manufacturing.” “Deficiencies in QU oversight are interpreted not as isolated failures, but as signs of systemic weaknesses in the quality management system.”

The Overinterpretation Problem: Lean Cuts in the Right Place

I want to be careful here not to construct an argument that justifies any amount of quality overhead as value-added. That would be equally wrong, and the pharmaceutical industry has its own version of this error.

Good Manufacturing Practice regulations are designed to ensure that products are consistently produced and controlled according to defined quality standards. But it is common for organizations to overinterpret regulations, leading to unnecessary processes that inflate costs and reduce efficiency without improving quality or patient safety. This is the mirror image of the compliance-theater failure: rather than cutting quality substance while maintaining quality appearance, these organizations build elaborate quality structures that are internally consistent but not actually calibrated to risk.

This is muri — overburden. And in TOC terms, it has a specific effect: it creates the appearance that quality is the constraint when it is not. When operations staff wait weeks for change control approvals on low-risk process improvements, when validation cycles run to years for straightforward equipment qualifications, when analysts spend more time in the quality system than at the bench — the quality function has become an organizational bottleneck. Not because quality itself is a bottleneck, but because the quality system is poorly designed.

This matters because it feeds the anti-quality narrative in organizations. When operations leaders experience quality as slow, expensive, and bureaucratically burdensome, their intuition that “quality is waste” feels confirmed. The correct response is not to strip the quality system further but to redesign it — to apply lean thinking to the quality system itself, asking what activities genuinely produce the outcomes (patient safety, regulatory confidence, process knowledge) that we are trying to achieve, and eliminating the administrative overhead that has accumulated without contributing to those outcomes.

The pharmaceutical industry has a specific version of this challenge in how it responds to regulatory expectations. When manufacturing objectives are primarily targeted toward compliance requirements rather than patient expectations, you get short-sighted decision-making. The CAPA system is a canonical example: set in motion primarily after failures rather than truly preventively, applied inconsistently, and treated as an administrative obligation rather than a learning mechanism.

Right-sizing the quality system is lean work. It requires honest value stream mapping of the quality system itself — every procedure, every review cycle, every approval gate — and the willingness to ask whether each step genuinely contributes to quality outcomes or whether it has calcified into ritual. Risk-based approaches to quality management, allocating rigorous controls to high-risk activities and lighter-touch approaches to lower-risk ones, are the lean answer to GMP over-engineering. They are not a compromise with compliance. They are what compliance looks like when it is designed well.
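One way to start that mapping is to record, for each step in a quality workflow, how long someone actually works on it, how long it waits, and whether the step contributes to a quality outcome. The sketch below computes process cycle efficiency, a common lean metric, for a hypothetical change control workflow. The step names, hours, and value judgments are invented for illustration.

```python
from dataclasses import dataclass

@dataclass
class Step:
    name: str
    touch_hours: float   # time someone is actually working on the item
    wait_hours: float    # time the item sits in a queue or inbox
    adds_value: bool     # judgment call made during the mapping exercise

def process_cycle_efficiency(steps):
    """Share of total lead time spent on value-adding work."""
    lead_time = sum(s.touch_hours + s.wait_hours for s in steps)
    value_time = sum(s.touch_hours for s in steps if s.adds_value)
    return value_time / lead_time

def ritual_candidates(steps):
    """Non-value-adding steps, largest lead-time consumers first."""
    flagged = [s for s in steps if not s.adds_value]
    return sorted(flagged, key=lambda s: s.touch_hours + s.wait_hours, reverse=True)

# Hypothetical change control workflow.
change_control = [
    Step("impact assessment", 4, 20, True),
    Step("duplicate sign-off", 1, 72, False),
    Step("QA review", 3, 40, True),
    Step("reformat into template", 2, 8, False),
]

pce = process_cycle_efficiency(change_control)
print(f"{pce:.1%}")
print([s.name for s in ritual_candidates(change_control)])
```

The `adds_value` flag is the hard part and cannot be automated. It is the risk-based judgment the paragraph above describes, and the code only makes the consequences of that judgment visible.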

Applying the Five Focusing Steps to Your Quality System

Let me be concrete about what it looks like to apply TOC thinking to a quality and compliance system. Not as a theoretical exercise, but as an operational analysis tool.

Step 1: Identify the Constraint

What, in your current quality system, is genuinely limiting throughput — not fake throughput (releasing batches that will later fail), but real throughput (consistently delivering products that meet patient needs and regulatory requirements)?

In some organizations, the constraint is investigation capacity. The investigation queue grows faster than it can be cleared. Deviations sit open for months. Root cause analysis is shallow because the team is perpetually in triage. Every new excursion that enters the system competes for attention with fifty that are already open. This is a true quality constraint — and it cascades. Open deviations block batch releases. Shallow root cause analysis means the same problem recurs. The organization is perpetually fighting fires it never fully extinguishes.

In others, the constraint is change control. Every process improvement, every equipment modification, every procedure update must pass through a change control process that is under-resourced, lacks authority, and is systematically slow. The result is operational stagnation: the organization cannot improve because the mechanism for capturing and implementing improvements is clogged.

In still others, the constraint is not quality function capacity at all, but quality culture. Operations staff who do not understand why quality controls exist, or who have learned to perform around them rather than with them, create a perpetual stream of deviations, documentation errors, and control failures that consume quality function capacity and prevent any sustainable improvement.

Identifying the real constraint requires honest data. Not the data in your quality system dashboard (which measures what you already decided to measure), but the data you get from spending time in the system: how long does a CAPA stay open? What fraction of investigations reach a root cause that is actually predictive — specific enough that preventing the cause would prevent the recurrence? Where do change requests die in the queue?
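As a starting point for that kind of data, the sketch below profiles each quality queue by open backlog and the age of what is still open. The record layout, queue names, and dates are hypothetical, and the "largest and oldest backlog" rule is a rough heuristic for where to look first, not a formal constraint analysis.

```python
from datetime import date
from statistics import median

# Hypothetical export: (queue, date opened, date closed or None if still open).
records = [
    ("investigation", date(2025, 1, 10), None),
    ("investigation", date(2025, 3, 1), None),
    ("investigation", date(2025, 5, 15), date(2025, 6, 1)),
    ("change_control", date(2025, 6, 1), None),
    ("change_control", date(2025, 4, 1), date(2025, 4, 20)),
    ("change_control", date(2025, 5, 1), date(2025, 5, 10)),
]

def queue_profile(records, as_of):
    """Per-queue counts of open and closed items plus median age of open items."""
    raw = {}
    for queue, opened, closed in records:
        entry = raw.setdefault(queue, {"open_ages": [], "closed": 0})
        if closed is None:
            entry["open_ages"].append((as_of - opened).days)
        else:
            entry["closed"] += 1
    return {
        queue: {
            "open": len(e["open_ages"]),
            "closed": e["closed"],
            "median_open_age_days": median(e["open_ages"]) if e["open_ages"] else 0,
        }
        for queue, e in raw.items()
    }

def likely_constraint(profile):
    """Heuristic: the queue with the largest, then oldest, open backlog."""
    return max(profile, key=lambda q: (profile[q]["open"], profile[q]["median_open_age_days"]))

profile = queue_profile(records, as_of=date(2025, 6, 30))
print(profile)
print(likely_constraint(profile))
```

Numbers like these only point to a candidate. Whether a root cause is actually predictive still has to be judged by reading the investigations themselves.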

Step 2: Exploit the Constraint

Before investing in more resources, what can be done to use the existing constraint capacity more effectively?

For an investigation-constrained quality system, this might mean risk-stratifying deviations more aggressively so that the team’s best analytical capacity is reserved for high-impact events rather than being consumed equally by every logbook discrepancy. It might mean developing better templates and analytical frameworks so that each investigation starts from a higher baseline. It might mean training operations staff to capture more complete and accurate initial event descriptions so that investigations start with better data.
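Risk stratification can be as simple as a scoring rule applied at intake. The sketch below is one hypothetical version: the 1 to 5 severity scale, the recurrence weighting, the thresholds, and the tier names are all assumptions, and a real scheme would have to come from the site's own quality risk management procedure.

```python
def risk_score(severity, recurrences_12m):
    """Assumed rule: severity (1 to 5) weighted up by recurrences in the last 12 months."""
    return severity * (1 + recurrences_12m)

def risk_tier(severity, recurrences_12m):
    """Map a deviation to an investigation depth using assumed thresholds."""
    score = risk_score(severity, recurrences_12m)
    if score >= 10:
        return "full_investigation"
    if score >= 4:
        return "standard_review"
    return "trend_only"

# Hypothetical intake list: (description, severity, recurrences in last 12 months).
deviations = [
    ("logbook entry missing initials", 1, 0),
    ("EM excursion in Grade A", 5, 1),
    ("label reconciliation gap", 2, 1),
]

# Highest-risk events first, so the best analytical capacity goes to them.
triaged = sorted(deviations, key=lambda d: risk_score(d[1], d[2]), reverse=True)
for description, severity, recurrences in triaged:
    print(risk_tier(severity, recurrences), "-", description)
```

Including recurrence in the score is a deliberate choice. A minor event that keeps coming back is a signal that an earlier root cause was not predictive, so it earns deeper attention than its severity alone would suggest.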

For a change control-constrained system, it might mean implementing tiered review pathways — a fast track for low-risk changes with minimal documentation burden, a standard track for moderate-risk changes, and full review only for high-risk changes that warrant it. This is not a compromise with GMP; it is a GMP-endorsed approach. ICH Q10 and FDA’s process validation guidance both explicitly support risk-based approaches to managing change.
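A tiered pathway can be expressed as a short routing rule. The screening questions and track names below are hypothetical examples of what a site might define; they are not taken from ICH Q10 or any FDA guidance.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ChangeRequest:
    title: str
    affects_validated_state: bool    # assumed screening question
    affects_regulatory_filing: bool  # assumed screening question
    like_for_like: bool              # e.g. identical replacement part

def review_track(change):
    """Route a change to the lightest track its risk profile allows."""
    if change.affects_regulatory_filing or change.affects_validated_state:
        return "full"
    if change.like_for_like:
        return "fast"
    return "standard"

# Hypothetical change requests.
requests = [
    ChangeRequest("replace gasket with identical part", False, False, True),
    ChangeRequest("reword SOP for clarity", False, False, False),
    ChangeRequest("new granulation endpoint", True, True, False),
]

for request in requests:
    print(review_track(request), "-", request.title)
```

The rule checks the high-risk questions first, so a change can never reach the fast track by being labeled like-for-like while also touching the validated state.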

Exploitation, in Goldratt’s sense, means getting the most out of the constraint without additional investment. In a quality context, this is about eliminating waste from quality processes — the scheduling conflicts, the approval queues, the unnecessary review loops, the redundant documentation — so that the actual analytical and judgment work gets as much of the available time as possible.

Step 3: Subordinate Everything Else to the Constraint

This is the step that most organizations skip, and it is where the most significant organizational change is required.

If investigation capacity is the constraint, then everything else in the system should be designed to protect it. Operations practices should minimize the defect rate entering the investigation queue — not to avoid scrutiny but to ensure that when investigations are required, they address genuinely significant events rather than being consumed by administrative noise. Quality management review cycles should be scheduled around the investigation queue, not around calendar convenience. Resource allocation decisions should prioritize the investigation function.

If quality culture is the constraint, then everything else must serve the culture-building effort. Training programs, visual management, how leaders respond to deviations, whether the organizational response to an excursion is blame or learning — all of these must be subordinated to the culture goal. This is not soft management theory. It is the arithmetic of constrained systems: if you cannot change the constraint, the constraint governs everything.

The organizational corollary is pointed: if quality and compliance are genuinely in the value-creating part of the system — if they are what makes throughput real rather than illusory — then everything else should subordinate to them. Production schedules, headcount decisions, capital investment priorities. Not because quality is more important as an abstract value, but because optimizing around the constraint is the only rational strategy in a constrained system.

Step 4: Elevate the Constraint

When exploitation and subordination have been exhausted and the constraint still limits throughput, it is time to invest. In a quality context, this might mean increasing investigation staffing, implementing better analytical tools, investing in training programs, or redesigning quality system architecture.

The important discipline here is sequencing. Organizations that jump immediately to “elevate” — buying an expensive quality management software system, hiring a large team, deploying complex digital tools — before exploiting and subordinating the constraint often find that the investment does not move the needle. The constraint shifts, or the new resources are consumed by the same structural inefficiencies that created the constraint in the first place.

Pharma quality and IT investments offer endless examples of this error. EQMS implementations that automate a broken process rather than fixing it. Electronic batch records deployed over fundamentally flawed process designs. Environmental monitoring platforms generating beautifully formatted reports of data that was never representative to begin with. The complexity multiplies. The actual quality outcome does not improve. Quality teams drown in documentation while missing the real signals.

Step 5: Repeat

This is where the lean and TOC frameworks converge most explicitly: perfection is not a state; it is a direction of travel. Once the current constraint is broken, the next constraint emerges. The goal is not to eliminate all constraints — that is impossible — but to keep identifying them, keep improving, and never let inertia become the new constraint.

Goldratt’s warning in Step 5 is unusually direct: do not let inertia become the constraint. This is the failure mode of organizations that solved a quality problem once and then stopped. A CAPA that addressed the root cause but was never verified for effectiveness. A validation that was robust at implementation but never updated as the process evolved. An environmental monitoring program that was representative of operations as they existed three years ago but has never been revised to reflect current facility loading or process changes.
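Inertia of this kind can be checked mechanically: compare when each control was last reviewed against when the process it covers last changed. The control names and dates below are hypothetical.

```python
from datetime import date

# Hypothetical register: when each control was last reviewed.
last_reviewed = {
    "EM sampling plan": date(2022, 3, 1),
    "cleaning validation": date(2024, 11, 15),
}

# Hypothetical change history: most recent change to the process each control covers.
last_process_change = {
    "EM sampling plan": date(2024, 6, 1),
    "cleaning validation": date(2024, 2, 1),
}

def stale_controls(reviewed, changed):
    """Controls whose covered process changed after the control was last reviewed."""
    return sorted(
        name
        for name, reviewed_on in reviewed.items()
        if name in changed and changed[name] > reviewed_on
    )

print(stale_controls(last_reviewed, last_process_change))
```

A check like this finds controls that are candidates for re-evaluation. It does not say whether the control is still adequate, which remains a technical judgment.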

In lean terms, this is the pursuit of perfection — Womack and Jones’s fifth principle. Not as an abstract aspiration, but as an operational discipline of continuously questioning whether current controls are still calibrated to current risk.

The Culture Behind the Framework

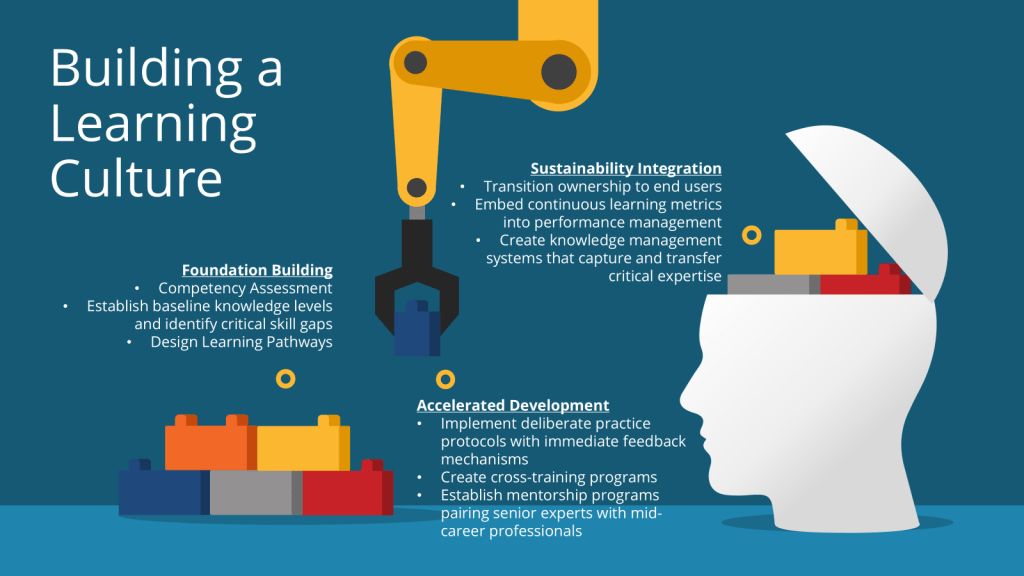

All of this — the lean principles, the TOC analysis, the five focusing steps — is intellectual scaffolding. The organizations that consistently fail at compliance are not failing because they lack frameworks. They are failing because they have the wrong culture, and culture is upstream of systems.

In the organizations where lean is misapplied to eliminate quality (Boeing), where compliance is performed rather than practiced (Ranbaxy), where the Quality Unit lacks authority to function as a genuine check on production decisions (the 2025 warning letters), there is a common cultural feature: the short term is consistently prioritized over the long term. Schedule pressure defeats quality judgment. This quarter’s cost reduction defeats next quarter’s reliability investment. The immediate discomfort of a delayed release is weighted more heavily than the long-term cost of a recall.

This is not unique to any particular industry or geography. The 70% lean implementation failure rate documented in Industry Week surveys is not primarily a problem of methodology. Kaizen Institute research identifies it clearly: 30-40% of lean success is tools; 60-70% is people. Organizations that treat lean as a toolkit to deploy — rather than a philosophy to embody — get the tools without the outcomes.

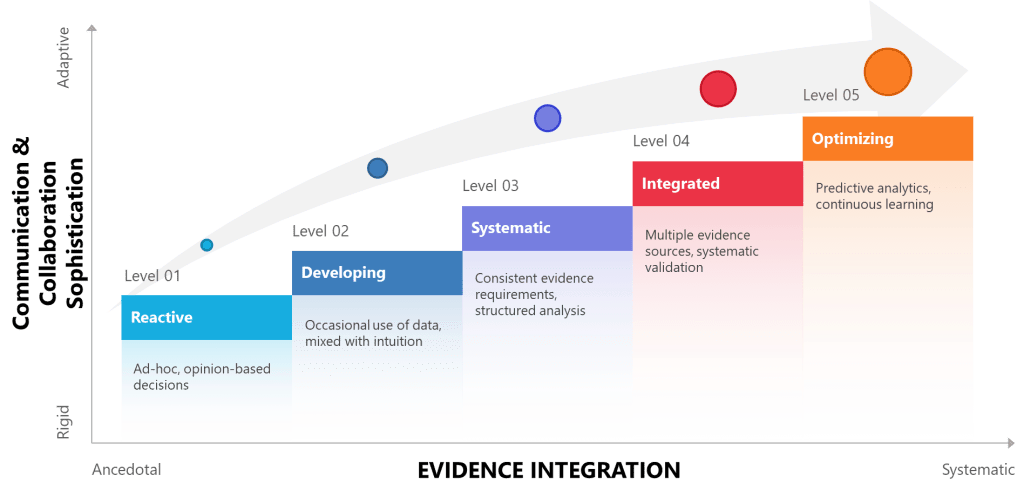

The same is true of quality culture. FDA’s analysis of pharmaceutical quality management maturity consistently identifies culture as the decisive variable: “When manufacturing objectives are targeted to meet compliance requirements rather than patient expectations, you get short-sighted decision making.” The Quality Maturity Model that FDA has been developing through its quality metrics initiative is explicitly designed to measure and encourage quality culture that goes beyond cGMP requirements — to recognize that sustainable quality performance requires an organizational identity, not just a management system.

What does quality culture look like when it is working? It looks like operations leadership that treats a quality hold as information rather than obstruction. It looks like Quality Unit staff who understand what they are protecting and why — who can articulate the patient impact of the decisions they are making. It looks like investigations that are genuinely curious rather than defensively conclusory. It looks like change control that is used as a knowledge management tool, capturing what was learned from each change rather than just documenting that it happened.

It also looks like a willingness to spend real money on quality infrastructure, not because regulators require it but because the organization understands that quality investment is throughput investment. FDA’s own economic analysis of pharmaceutical quality management is unambiguous: poor quality management practices have caused billions of dollars in lost revenue over two decades, and the labor needed to manage drug shortages alone costs $216 million to $359 million a year. The individual firm economics are equally clear: failed batches, recalls, regulatory remediation programs, and consent decrees cost far more than the investment that would have prevented them.

What to Ask of Your Own Organization

If you want to stress-test whether your organization has the right mental model of compliance and quality, there are a few questions that cut to it quickly. Treat these not as a checklist but as conversation starters — the kind of conversations that reveal whether the water you are swimming in is the right water.

On classification and value

- How does your organization describe quality in budget conversations? Is it a cost center or an investment? What evidence would change that framing?

- If you were to map your quality system activities against the lean value taxonomy — value-added, necessary non-value-added, pure waste — where would the bulk of quality work fall? How confident are you in that assessment? Who made it, and were quality professionals part of the conversation?

On the constraint

- Where does throughput (good product to patients) actually get limited in your system? Is the quality system one of those places? If so, is it limited because the quality function is under-resourced, or because the quality system is poorly designed?

- What happens in your organization when a quality hold intersects with production schedule pressure? Who wins? What are the cultural and structural forces that produce that outcome?

- Where in your quality system is the most expensive rework, meaning the events that consume the most time and analytical capacity and generate the most re-review? Are those events being prevented, or just managed after the fact?

On waste in the quality system

- What fraction of your CAPA actions close with a genuine, specific root cause that is different from the proximate cause? What fraction close with “operator retraining” regardless of what the investigation found?

- How long does it take to change a low-risk SOP? If the answer is three months, you have a change control system that is producing muri without reducing muda. What would it take to redesign that pathway?

- Which of your GMP requirements are genuinely risk-proportionate, and which reflect accumulated regulatory overinterpretation? When was the last time your organization asked that question systematically?
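The first question above, about how CAPAs actually close, can be answered with very simple arithmetic once the records are in a consistent form. The sketch below is only an illustration: the `Capa` record, its field names, and the literal string "operator retraining" are hypothetical stand-ins for whatever your own quality system stores, and comparing cause text is a crude proxy for a human judgment about whether a root cause is genuinely distinct.

```python
from dataclasses import dataclass


@dataclass
class Capa:
    """Hypothetical, simplified view of a closed CAPA record."""
    capa_id: str
    proximate_cause: str
    root_cause: str
    corrective_action: str


def _norm(text: str) -> str:
    # Normalize case and surrounding whitespace before comparing text fields.
    return text.strip().lower()


def closure_profile(capas: list[Capa]) -> dict[str, float]:
    """Return the two fractions asked about in the question above."""
    total = len(capas)
    if total == 0:
        return {"distinct_root_cause": 0.0, "retraining_only": 0.0}

    # CAPAs whose recorded root cause differs from the proximate cause.
    distinct = sum(
        1 for c in capas if _norm(c.root_cause) != _norm(c.proximate_cause)
    )
    # CAPAs that closed with retraining as the corrective action.
    retraining = sum(
        1 for c in capas if _norm(c.corrective_action) == "operator retraining"
    )
    return {
        "distinct_root_cause": distinct / total,
        "retraining_only": retraining / total,
    }


# Made-up example records, not real data.
sample = [
    Capa("C-1", "missed step", "missed step", "operator retraining"),
    Capa("C-2", "wrong label", "wrong label", "operator retraining"),
    Capa("C-3", "late sample", "late sample", "Operator Retraining"),
    Capa("C-4", "filter failure", "supplier spec gap", "revise supplier spec"),
]
profile = closure_profile(sample)
print(profile)
```

If the retraining fraction is high and the distinct-root-cause fraction is low, that is a prompt to read the underlying investigations, not a verdict on its own.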

On culture

- If a quality professional in your organization identifies a serious concern about a batch and recommends a hold, how does that decision get made? What is the organizational pressure on that professional? What happens to them if they are wrong?

- When deviations occur, is the first question “who is accountable?” or “what does this tell us about our system?” Both questions have their place. The sequence matters.

- Does your organization treat the cost of poor quality as a real cost — tracked, reported, and weighed against quality investment decisions? Or does the accounting system make poor quality costs invisible while quality investment costs are highly visible?

A Different Synthesis

The organizations that get this right — that build quality and compliance systems that genuinely support lean performance rather than impeding it — share a set of operational beliefs that are worth naming explicitly.

They believe that quality is not a department. It is a property of the system. The Quality Unit has a specific role, authority, and set of responsibilities. But quality outcomes are produced by the entire organization — by operations staff who understand why controls exist, by engineering teams who build quality into process design, by leadership that treats quality data as decision-relevant information rather than audit risk management.

They believe that the cost of poor quality is always larger than the cost of good quality. Not in some abstract, long-run way, but in the specific arithmetic of their own operation. They track it. They use it in investment decisions. They make it visible.

They believe that compliance is not the ceiling of performance; it is the floor. FDA’s Quality Maturity Model, the ICH Q10 pharmaceutical quality system guidance, the latest revisions of Annex 1, and the proposed Annex 15 expansion are all regulatory frameworks that explicitly contemplate continuous improvement beyond minimum compliance. Organizations that reach the floor and stop moving are not lean organizations. They are organizations waiting for the next deviation.

And they believe that lean thinking applies to the quality system itself. Not as an excuse to cut quality oversight, but as a discipline of honest evaluation: which quality activities genuinely contribute to patient outcomes and regulatory confidence, and which have accumulated as ritual? The right answer is not “all quality activity is valuable.” The right answer requires ongoing, rigorous inquiry.

The Conclusion That Is Not a Conclusion

I have been careful throughout this piece not to argue that compliance is easy, or that the regulatory burden on pharmaceutical manufacturing is always perfectly calibrated, or that every FDA requirement reflects ideal risk management. These are complicated, contentious questions that deserve their own treatment.

What I have argued is narrower and, I think, more robust: the belief that compliance and quality are categories of waste (necessary wastes, tolerated costs) is structurally wrong when examined through the frameworks that organizations claim to use. Lean thinking, correctly applied, classifies quality as value-creating when the customer (the patient) genuinely requires it. The Theory of Constraints shows that quality failures destroy constraint capacity and that protecting the constraint requires quality investment; it is not optional. The 3Ms of waste (muda, mura, and muri) are produced by quality underinvestment, not by quality itself.

The organizations that have learned this the hardest way — Boeing through $20 billion in direct losses and two crashes, Ranbaxy through $500 million in fines and permanent reputational damage, dozens of pharmaceutical manufacturers through consent decrees and import alerts — did not fail because they over-invested in quality. They failed because they convinced themselves, using superficial applications of lean thinking, that quality was the waste to be minimized.

The frameworks were not wrong. The reading was.

The useful question is not “how little can we spend on compliance?” The useful question is “what does a quality system look like that genuinely creates value — that prevents the defects, controls the variation, captures the knowledge, and enables the throughput that makes patient outcomes and organizational sustainability possible simultaneously?”

That question is harder to answer. It requires real analysis, real investment, and a cultural commitment to treating quality outcomes as the measure of success rather than compliance checkboxes as the proxy for it.

But it is the only question that the lean tradition and the Theory of Constraints, correctly read, actually ask.