On the MHRA Blog, a GDP Inspector has posted some thoughts on Data Integrity. As always, it is valuable to read what an agency, or in this case a representative of an agency, is thinking.

The post starts with a very good point, one that I think needs to be reiterated continually: Data Integrity is not new; it is just an evolution of best practices.

It is good to see data integrity addressed from the distribution (GDP) perspective. Too often the focus is on the GCP and GMP side, so bringing distribution into the discussion should remind everyone that:

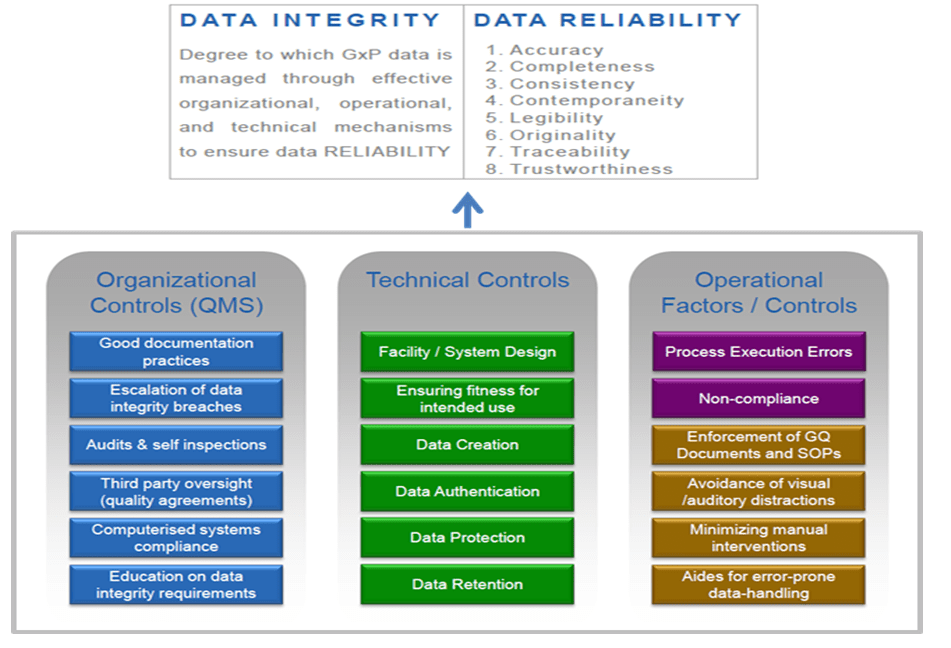

- Data Integrity oversight and governance covers:
  - All aspects of the product lifecycle
  - All aspects of the GxP-regulated data lifecycle, which runs from the time of creation to the point of use and extends through storage (retention), archival, retrieval, and eventual disposal.

Posts like this should also remind folks that data integrity is still an evolving topic, and we should expect more guidance on it from the agencies in the near future. Make sure you are keeping data integrity in your sights and have a process in place to evaluate and improve.

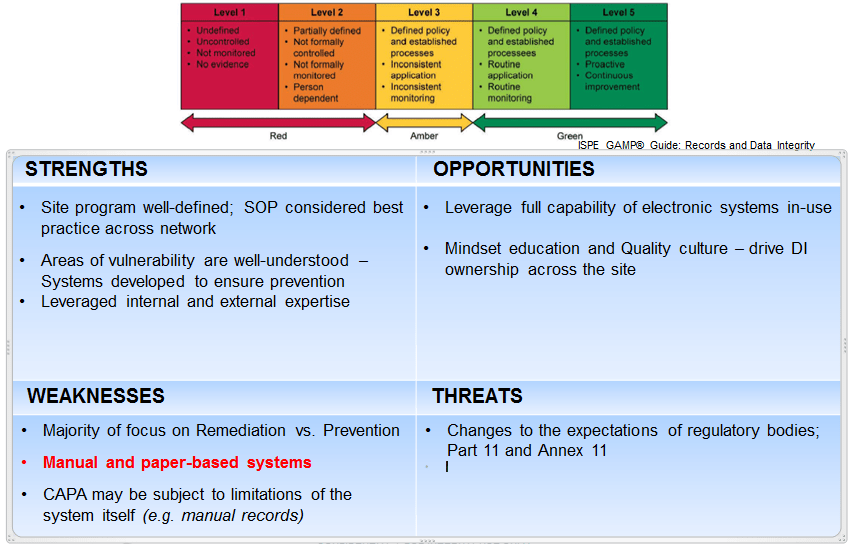

I recommend starting at the beginning: analyze the health of your current program and perform a SWOT (strengths, weaknesses, opportunities, threats) analysis.