Gilbert’s Behavior Engineering Model (BEM) presents a concise way to consider both the environmental and the individual influences on a person’s behavior. The model suggests that a person’s environment supports impact to one’s behavior through information, instrumentation, and motivation. Examples include feedback, tools, and financial incentives (respectively), to name a few. The model also suggests that an individual’s behavior is influenced by their knowledge, capacity, and motives. Examples include training/education, physical or emotional limitations, and what drives them (respectively), to name a few. Let’s look at some further examples to better understand the variability of individual behavioral influences to see how they may negatively impact data integrity.

Kip Wolf, “People: The Most Persistent Risk To Data Integrity”

Good article in Pharmaceutical Online last week. It cannot be stated enough, and it is good that folks like Kip keep saying it: to understand data integrity we need to understand behavior (what people do and say) and realize it is a means to an end. It is very easy to focus on behaviors, which are observable acts that can be seen and heard by management, auditors, and other stakeholders, but what is more critical is to design systems that drive the behaviors we want. We need to recognize that behavior and its causes are extremely valuable signals for improvement efforts to anticipate, prevent, catch, or recover from errors.

We do this by realizing that error-provoking aspects of design, procedures, processes, and human nature exist throughout our organizations, and that people cannot perform better than the organization supporting them.

|

Design Consideration |

Human Error Considerations |

Manage Controls |

|

Define the Scope of Work · Identify the critical steps · Consider the possible errors associated with each critical step and the likely consequences. · Ponder the "worst that could happen." · Consider the appropriate human performance tool(s) to use. · Identify other controls, contingencies, and relevant operating experience. |

When tasks are identified and prioritized, and resources are properly allocated (e.g., supervision, tools, equipment, work control, engineering support, training), human performance can flourish.

These organizational factors create a unique array of job-site conditions – a good work environment – that sets people up for success. Human error increases when expectations are not set, tasks are not clearly identified, and resources are not available to carry out the job. |

The error precursors – conditions that provoke error – are reduced. This includes things such as: · Unexpected conditions · Workarounds · Departures from the routine · Unclear standards · Need to interpret requirements

Properly managing controls is dependent on the elimination of error precursors that challenge the integrity of controls and allow human error to become consequential. |

|

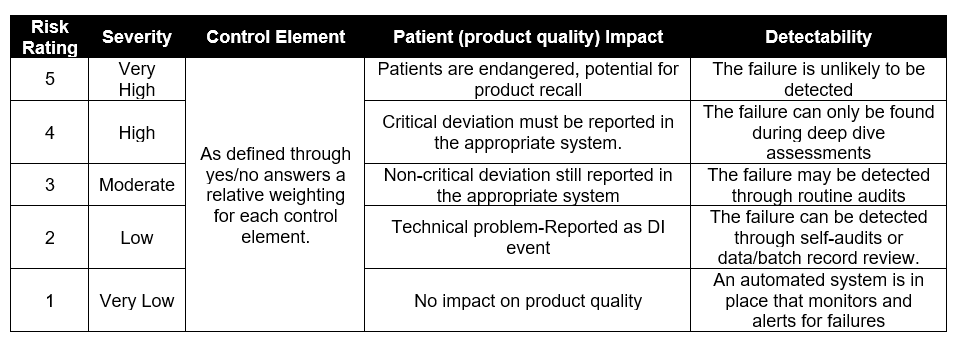

Apply proactive Risk Management |

When risk is properly analyzed we can take appropriate action to mitigate the risks. Include the criteria in risk assessments: · Adverse environmental conditions (e.g. impact of gowning, noise, temperature, etc) · Unclear roles/responsibilities · Time pressures · High workload · Confusing displays or controls |

Addressing risk through engineering and administrative controls are a cornerstone of a quality system.

Strong administrative and cultural controls can withstand human error. Controls are weakened when conditions are present that provoke error.

Eliminating error precursors in the workplace reduces the incidences of active errors. |

|

Perform Work

Utilizing error reduction tools as part of all work. Examples include: · Self-checking o Questioning attitude o Stop when unsure o Effective communication o Procedure use and adherence o Peer-checking o Second-person verifications o Turnovers

Engineering Controls can often take the place of some of these, for example second-person verifications can be replaced by automation. |

Appropriate process and tools in place to ensure that the organizational processes and values are in place to adequately support performance. |

Because people err and make mistakes, it is all the more important that controls are implemented and properly maintained. |

|

Feedback and Improvement

Continuous improvement is critical. Topics should include: · Surprises or unexpected outcomes. · Usability and quality of work documents · Knowledge and skill shortcomings · Minor errors during the activity · Unanticipated workplace conditions · Adequacy of tools and Resources · Quality of work planning/scheduling · Adequacy of supervision |

Errors during work are inevitable. If we strive to understand and address even inconsequential acts we can strengthen controls and make future performance better. |

Vulnerabilities with controls can be found and corrected when management decides it is important enough to devote resources to the effort

The fundamental aim of oversight is to improve resilience to significant events triggered by active errors in the workplace—that is, to minimize the severity of events.

Oversight controls provide opportunities to see what is happening, to identify specific vulnerabilities or performance gaps, to take action to address those vulnerabilities and performance gaps, and to verify that they have been resolved. |