On the morning of his thirtieth birthday, Josef K. is arrested. He doesn’t know what crime he’s accused of committing. The arresting officers can’t tell him. His neighbors assure him the authorities must have good reasons, though they don’t know what those reasons are. When he seeks answers, he’s directed to a court that meets in tenement attics, staffed by officials whose actions are never explained but always assumed to be justified. In The Castle, Kafka’s later novel, the bureaucracy is described as “flawless,” yet its protagonist, another K., watches a servant destroy paperwork because he can’t determine who the recipient should be.

Franz Kafka wrote The Trial in 1914, but he could have been describing a pharmaceutical deviation investigation in 2026.

Consider: A batch is placed on hold. The deviation report cites “failure to follow approved procedure.” Investigators interview operators, review batch records, and examine environmental monitoring data. The investigation concludes that training was inadequate, procedures were unclear, and the change control process should have flagged this risk. Corrective actions are assigned: retraining all operators, revising the SOP, and implementing a new review checkpoint in change control. The CAPA effectiveness check, conducted six months later, confirms that all actions have been completed. The quality system has functioned flawlessly.

Yet if you ask the operator what actually happened—what really happened, in the moment when the deviation occurred—you get a different story. The procedure said to verify equipment settings before starting, but the equipment interface doesn’t display the parameters the SOP references. It hasn’t for the past three software updates. So operators developed a workaround: check the parameters through a different screen, document in the batch record that verification occurred, and continue. Everyone knows this. Supervisors know it. The quality oversight person stationed on the manufacturing floor knows it. It’s been working fine for months.

Until this batch, when the workaround didn’t work, and suddenly everyone had to pretend they didn’t know about the workaround that everyone knew about.

This is what I call the Kafkaesque quality system. Not because it’s absurd—though it often is. But because it exhibits the same structural features Kafka identified in bureaucratic systems: officials whose actions are never explained, contradictory rationalizations praised as features rather than bugs, the claim of flawlessness maintained even as paperwork literally gets destroyed because nobody knows what to do with it, and above all, the systemic production of gaps between how things are supposed to work and how they actually work—gaps that everyone must pretend don’t exist.

Pharmaceutical quality systems are not designed to be Kafkaesque. They’re designed to ensure that medicines are safe, effective, and consistently manufactured to specification. They emerge from legitimate regulatory requirements grounded in decades of experience about what can go wrong when quality oversight is inadequate. ICH Q10, the FDA’s Quality Systems Guidance, EU GMP—these frameworks represent hard-won knowledge about the critical control points that prevent contamination, mix-ups, degradation, and the thousand other ways pharmaceutical manufacturing can fail.

But somewhere between the legitimate need for control and the actual functioning of quality systems, something goes wrong. The system designed to ensure quality becomes a system designed to ensure compliance. The compliance designed to demonstrate quality becomes compliance designed to satisfy inspections. The investigations designed to understand problems become investigations designed to document that all required investigation steps were completed. And gradually, imperceptibly, we build the Castle—an elaborate bureaucracy that everyone assumes is functioning properly, that generates enormous amounts of documentation proving it functions properly, and that may or may not actually be ensuring the quality it was built to ensure.

Legibility and Control

Regulatory authorities, corporate management, and any other entity trying to govern a complex system need legibility. They need to be able to “read” what’s happening in the systems they regulate. For pharmaceutical regulators, this means being able to understand, from batch records and validation documentation and investigation reports, whether a manufacturer is consistently producing medicines of acceptable quality.

Legibility requires simplification. The actual complexity of pharmaceutical manufacturing—with its tacit knowledge, operator expertise, equipment quirks, material variability, and environmental influences—cannot be fully captured in documents. So we create simplified representations. Batch records that reduce manufacturing to a series of checkboxes. Validation protocols that demonstrate method performance under controlled conditions. Investigation reports that fit problems into categories like “inadequate training” or “equipment malfunction”.

This simplification serves a legitimate purpose. Without it, regulatory oversight would be impossible. How could an inspector evaluate whether a manufacturer maintains adequate control if they had to understand every nuance of every process, every piece of tacit knowledge held by every operator, every local adaptation that makes the documented procedures actually work?

But we often mistake the simplified, legible representation for the reality it describes. We fall prey to the fallacy that if we can fully document a system, we can fully control it. If we specify every step in SOPs, operators will perform those steps. If we validate analytical methods, those methods will continue performing as validated. If we investigate deviations and implement CAPAs, similar deviations won’t recur.

The assumption is seductive because it’s partly true. Documentation does facilitate control. Validation does improve analytical reliability. CAPA does prevent recurrence—sometimes. But the simplified, legible version of pharmaceutical manufacturing is always a reduction of the actual complexity. And our quality systems can forget that the map is not the territory.

What happens when the gap between the legible representation and the actual reality grows too large? Pharmaceutical quality systems fail quietly, in the gap between work-as-imagined and work-as-done. In procedures that nobody can actually follow. In validated methods that don’t work under routine conditions. In investigations that document everything except what actually happened. In quality metrics that measure compliance with quality processes rather than actual product quality.

Metis: The Knowledge Bureaucracies Cannot See

We can contrast this formal, systematic, documented knowledge with metis: practical wisdom gained through experience, local knowledge that adapts to specific contexts, the know-how that cannot be fully codified.

Greek mythology personified metis as cunning intelligence, adaptive resourcefulness, the ability to navigate complex situations where formal rules don’t apply. The political scientist James C. Scott, in Seeing Like a State, uses the term to describe the local, practical knowledge that makes complex systems actually work despite their formal structures.

In pharmaceutical manufacturing, metis is the operator who knows that the tablet press runs better when you start it up slowly, even though the SOP doesn’t mention this. It’s the analytical chemist who can tell from the peak shape that something’s wrong with the HPLC column before it fails system suitability. It’s the quality reviewer who recognizes patterns in deviations that indicate an underlying equipment issue nobody has formally identified yet.

This knowledge is typically tacit: difficult to articulate, learned through experience rather than training, tied to specific contexts. A frequently cited estimate holds that tacit knowledge makes up as much as 90% of organizational knowledge; whatever the exact figure, it is rarely documented because it can’t easily be reduced to procedural steps. When operators leave or transfer, their metis goes with them.

High-modernist quality systems struggle with metis because they can’t see it. It doesn’t appear in batch records. It can’t be validated. It doesn’t fit into investigation templates. From the regulator’s-eye view, or from quality management’s, it’s invisible.

So we try to eliminate it. We write more detailed SOPs that specify exactly how to operate equipment, leaving no room for operator discretion. We implement lockout systems that prevent deviation from prescribed parameters. We design quality oversight that verifies operators follow procedures exactly as written.

This creates a dilemma that Sidney Dekker identifies as central to bureaucratic safety systems: the gap between work-as-imagined and work-as-done.

Work-as-imagined is how quality management, procedure writers, and regulators believe manufacturing happens. It’s documented in SOPs, taught in training, and represented in batch records. Work-as-done is what actually happens on the manufacturing floor when real operators encounter real equipment under real conditions.

In ultra-adaptive environments—which pharmaceutical manufacturing surely is, with its material variability, equipment drift, environmental factors, and human elements—work cannot be fully prescribed in advance. Operators must adapt, improvise, apply judgment. They must use metis.

But adaptation and improvisation look like “deviation from approved procedures” in a high-modernist quality system. So operators learn to document work-as-imagined in batch records while performing work-as-done on the floor. The batch record says they “verified equipment settings per SOP section 7.3.2” when what they actually did was apply the metis they’ve learned through experience to determine whether the equipment is really ready to run.

This isn’t dishonesty—or rather, it’s the kind of necessary dishonesty that bureaucratic systems force on the people operating within them. Kafka understood this. The villagers in The Castle provide contradictory explanations for the officials’ actions, and everyone praises this ambiguity as a feature of the system rather than recognizing it as a dysfunction. Everyone knows the official story and the actual story don’t match, but admitting that would undermine the entire bureaucratic structure.

Metis, Expertise, and the Architecture of Knowledge

Understanding why pharmaceutical quality systems struggle to preserve and use operator knowledge requires examining how knowledge actually exists and develops in organizations. Alongside James C. Scott’s concept of metis, three frameworks illuminate different facets of this challenge: Ikujiro Nonaka’s theory of organizational knowledge creation, W. Edwards Deming’s System of Profound Knowledge, and Anders Ericsson’s research on expertise development.

These frameworks aren’t merely academic concepts. They reveal why quality systems that look comprehensive on paper fail in practice, why experienced operators leave and take critical capability with them, and why organizations keep making the same mistakes despite extensive documentation of lessons learned.

The Architecture of Knowledge: Tacit and Explicit

Management scholar Ikujiro Nonaka distinguishes between two fundamental types of knowledge that coexist in all organizations. Explicit knowledge is codifiable—it can be expressed in words, numbers, formulas, documented procedures. It’s the content of SOPs, validation protocols, batch records, training materials. It’s what we can write down and transfer through formal documentation.

Tacit knowledge is subjective, experience-based, and context-specific. It has a cognitive dimension (beliefs, mental models, intuition) and a technical dimension (craft and know-how). Tacit knowledge is notoriously difficult to articulate. When an experienced analytical chemist looks at a chromatogram and says “something’s not right with that peak shape,” they’re drawing on tacit knowledge built through years of observing normal and abnormal results.

Nonaka’s insight is that these two types of knowledge exist in continuous interaction through what he calls the SECI model—four modes of knowledge conversion that form a spiral of organizational learning:

- Socialization (tacit to tacit): Tacit knowledge transfers between individuals through shared experience and direct interaction. An operator training a new hire doesn’t just explain the procedure; they demonstrate the subtle adjustments, the feel of properly functioning equipment, the signs that something’s going wrong. This is experiential learning, the acquisition of skills and mental models through observation and practice.

- Externalization (tacit to explicit): The difficult process of making tacit knowledge explicit through articulation. This happens through dialogue, metaphor, and reflection-on-action—stepping back from practice to describe what you’re doing and why. When investigation teams interview operators about what actually happened during a deviation, they’re attempting externalization. But externalization requires psychological safety; operators won’t articulate their tacit knowledge if doing so will reveal deviations from approved procedures.

- Combination (explicit to explicit): Documented knowledge combined into new forms. This is what happens when validation teams synthesize development data, platform knowledge, and method-specific studies into validation strategies. It’s the easiest mode because it works entirely with already-codified knowledge.

- Internalization (explicit to tacit): The process of embodying explicit knowledge through practice until it becomes “sticky” individual knowledge—operational capability. When operators internalize procedures through repeated execution, they’re converting the explicit knowledge in SOPs into tacit capability. Over time, with reflection and deliberate practice, they develop expertise that goes beyond what the SOP specifies.

Metis is the tacit knowledge that resists externalization. It’s context-specific, adaptive, often non-verbal. It’s what operators know about equipment quirks, material variability, and process subtleties—knowledge gained through direct engagement with complex, variable systems.

High-modernist quality systems, in their drive for legibility and control, attempt to externalize all tacit knowledge into explicit procedures. But some knowledge fundamentally resists codification. The operator’s ability to hear when equipment isn’t running properly, the analyst’s judgment about whether a result is credible despite passing specification, the quality reviewer’s pattern recognition that connects apparently unrelated deviations—this metis cannot be fully proceduralized.

Worse, the attempt to externalize all knowledge into procedures creates what Nonaka would recognize as a broken learning spiral. Organizations that demand perfect procedural compliance prevent socialization—operators can’t openly share their tacit knowledge because it would reveal that work-as-done doesn’t match work-as-imagined. Externalization becomes impossible because articulating tacit knowledge is seen as confession of deviation. The knowledge spiral collapses, and organizations lose their capacity for learning.

Deming’s Theory of Knowledge: Prediction and Learning

W. Edwards Deming’s System of Profound Knowledge provides a complementary lens on why quality systems struggle with knowledge. One of its four interrelated elements—Theory of Knowledge—addresses how we actually learn and improve systems.

Deming’s central insight: there is no knowledge without theory. Knowledge doesn’t come from merely accumulating experience or documenting procedures. It comes from making predictions based on theory and testing whether those predictions hold. This is what makes knowledge falsifiable—it can be proven wrong through empirical observation.

Consider analytical method validation through this lens. Traditional validation documents that a method performed acceptably under specified conditions; this is a description of past events, not theory. Lifecycle validation, properly understood, makes a theoretical prediction: “This method will continue generating results of acceptable quality when operated within the defined control strategy”. That prediction can be tested through Stage 3 ongoing verification. When the prediction fails—when the method doesn’t perform as validation claimed—we gain knowledge about the gap between our theory (the validation claim) and reality.
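To make the idea of a falsifiable validation claim concrete, here is a minimal Python sketch. It assumes, purely for illustration, a hypothetical HPLC method whose control strategy fixes a column-temperature range and whose validation claim is a minimum resolution; the names `Run`, `ValidationClaim`, and `review`, and all numbers, are invented. What it shows is that a routine run can falsify the claim only if it was operated inside the control strategy; a failure outside that range tells us about the control strategy’s boundaries instead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Run:
    """One routine run of a hypothetical HPLC method."""
    column_temp_c: float  # operating condition governed by the control strategy
    resolution: float     # performance attribute covered by the validation claim


@dataclass(frozen=True)
class ValidationClaim:
    """Prediction: within the control strategy, resolution stays >= min_resolution."""
    temp_low_c: float
    temp_high_c: float
    min_resolution: float

    def in_scope(self, run: Run) -> bool:
        # The claim only makes a prediction for runs inside the control strategy.
        return self.temp_low_c <= run.column_temp_c <= self.temp_high_c

    def falsified_by(self, run: Run) -> bool:
        # In scope and still failing: evidence against the claim itself.
        return self.in_scope(run) and run.resolution < self.min_resolution


def review(claim: ValidationClaim, runs: list[Run]) -> tuple[list[Run], list[Run]]:
    """Split routine runs into claim-falsifying runs and out-of-scope runs."""
    falsifying = [r for r in runs if claim.falsified_by(r)]
    out_of_scope = [r for r in runs if not claim.in_scope(r)]
    return falsifying, out_of_scope


# Illustrative values only.
claim = ValidationClaim(temp_low_c=28.0, temp_high_c=32.0, min_resolution=2.0)
runs = [Run(30.0, 2.4), Run(31.5, 1.8), Run(35.0, 1.6)]
falsifying, out_of_scope = review(claim, runs)
```

Here the 31.5 °C run is the one that matters: it was inside the control strategy and still missed the predicted resolution, so it is evidence against the validation claim rather than an isolated operator problem. The 35 °C run failed too, but the claim never predicted anything about it.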

This connects directly to metis. Operators with metis have internalized theories about how systems behave. When an experienced operator says “We need to start the tablet press slowly today because it’s cold in here and the tooling needs to warm up gradually,” they’re articulating a theory based on their tacit understanding of equipment behavior. The theory makes a prediction: starting slowly will prevent the tablet defects we see when we rush on cold days.

But hierarchical, procedure-driven quality systems don’t recognize operator theories as legitimate knowledge. They demand compliance with documented procedures regardless of operator predictions about outcomes. So the operator follows the SOP, the tablet defects occur, a deviation is written, and the investigation concludes that “procedure was followed correctly” without capturing the operator’s theoretical knowledge that could have prevented the problem.

Another element, Knowledge of Variation, is equally crucial. Deming distinguished between common cause variation (inherent to the system, and management’s responsibility to address through system redesign) and special cause variation (abnormalities requiring investigation). Drawing on his experience across many industries, he estimated that about 94% of problems are common cause: they reflect system design issues, not individual failures.

Bureaucratic quality systems systematically misattribute variation. When operators struggle to follow procedures, the system treats this as special cause (operator error, inadequate training) rather than common cause (the procedures don’t match operational reality, the system design is flawed). This misattribution prevents system improvement and destroys operator metis by treating adaptive responses as deviations.

From Deming’s perspective, metis is how operators manage system variation when procedures don’t account for the full range of conditions they encounter. Eliminating metis through rigid procedural compliance doesn’t eliminate variation—it eliminates the adaptive capacity that was compensating for system design flaws.
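Shewhart’s control chart is the standard tool for telling the two kinds of variation apart. The sketch below is a minimal individuals (XmR) chart in Python: it computes natural process limits from a baseline period as the mean plus or minus 2.66 times the average moving range, then labels new points outside those limits as candidate special causes. The function names and the monthly deviation counts are hypothetical, chosen only for illustration, and a real application would also apply run and trend rules, which this sketch omits.

```python
from statistics import mean

# Standard XmR scaling constant: 3 / d2, where d2 = 1.128 for moving ranges of two points.
XMR_CONSTANT = 2.66


def xmr_limits(baseline: list[float]) -> tuple[float, float, float]:
    """Return (lower, center, upper) natural process limits from a stable baseline."""
    if len(baseline) < 2:
        raise ValueError("need at least two baseline observations")
    center = mean(baseline)
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    spread = XMR_CONSTANT * mean(moving_ranges)
    return center - spread, center, center + spread


def attribute(baseline: list[float], new_points: list[float]) -> list[str]:
    """Label each new point 'special' if outside the limits, otherwise 'common'."""
    lower, _, upper = xmr_limits(baseline)
    return ["common" if lower <= x <= upper else "special" for x in new_points]


# Hypothetical monthly counts of deviations citing one SOP.
baseline = [4, 5, 3, 6, 4, 5, 4, 5]
labels = attribute(baseline, [4, 6, 12, 5])
```

Only the month with 12 deviations signals a special cause worth investigating as such. The other months fall within the limits, so if their level is still unacceptable, Deming’s prescription is to change the system rather than to investigate each one as an operator error.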

Ericsson and the Development of Expertise

Psychologist Anders Ericsson’s research on expertise development reveals another dimension of how knowledge works in organizations. His studies across fields from chess to music to medicine dismantled the myth that expert performers have unusual innate talents. Instead, expertise is the result of what he calls deliberate practice—individualized training activities specifically designed to improve particular aspects of performance through repetition, feedback, and successive refinement.

Deliberate practice has specific characteristics:

- It involves tasks initially outside the current realm of reliable performance but masterable within hours through focused concentration

- It requires immediate feedback on performance

- It includes reflection between practice sessions to guide subsequent improvement

- It continues for extended periods: Ericsson’s studies suggested that reaching high levels of expertise typically takes around ten years of sustained deliberate practice, even in well-structured domains

Critically, experience alone does not create expertise. Studies show only a weak correlation between years of professional experience and actual performance quality. Merely repeating activities leads to automaticity and arrested development—practice makes permanent, but only deliberate practice improves performance.

This has profound implications for pharmaceutical quality systems. When we document procedures and require operators to follow them exactly, we’re eliminating the deliberate practice conditions that develop expertise. Operators execute the same steps repeatedly without feedback on the quality of performance (only on compliance with procedure), without reflection on how to improve, and without tackling progressively more challenging aspects of the work.

Worse, the compliance focus actively prevents expertise development. Ericsson emphasizes that experts continually try to improve beyond their current level of performance. But quality systems that demand perfect procedural compliance punish the very experimentation and adaptation that characterizes deliberate practice. Operators who develop metis through deliberate engagement with operational challenges must conceal that knowledge because it reveals they adapted procedures rather than following them exactly.

The expertise literature also reveals how knowledge transfers—or fails to transfer—in organizations. Research identifies multiple knowledge transfer mechanisms: social networks, organizational routines, personnel mobility, organizational design, and active search. But effective transfer depends critically on the type of knowledge involved.

Tacit knowledge transfers primarily through mentoring, coaching, and peer-to-peer interaction—what Nonaka calls socialization. When experienced operators leave, this tacit knowledge vanishes if it hasn’t been transferred through direct working relationships. No amount of documentation captures it because tacit knowledge is experience-based and context-specific.

Explicit knowledge transfers through documentation, formal training, and digital platforms. This is what quality systems are designed for: capturing knowledge in SOPs, specifications, validation protocols. But organizations often mistake documentation for knowledge transfer. Creating comprehensive procedures doesn’t ensure that people learn from them. Without internalization—the conversion of explicit knowledge back into tacit operational capability through practice and reflection—documented knowledge remains inert.

Knowledge Management Failures in Pharmaceutical Quality

These three frameworks—Nonaka’s knowledge conversion spiral, Deming’s theory of knowledge and variation, Ericsson’s deliberate practice—reveal systematic failures in how pharmaceutical quality systems handle knowledge:

- Broken socialization: Quality systems that punish deviation prevent operators from openly sharing tacit knowledge about work-as-done. New operators learn the documented procedures but not the metis that makes those procedures actually work.

- Failed externalization: Investigation processes that focus on compliance rather than understanding don’t capture operator theories about causation. The tacit knowledge that could prevent recurrence remains tacit—and often punishable if revealed.

- Meaningless combination: Organizations generate elaborate CAPA documentation by combining explicit knowledge about what should happen without incorporating tacit knowledge about what actually happens. The resulting “knowledge” doesn’t reflect operational reality.

- Superficial internalization: Training programs that emphasize procedure memorization rather than capability development don’t convert explicit knowledge into genuine operational expertise. Operators learn to document compliance without developing the metis needed for quality work.

- Misattribution of variation: Systems treat operator adaptation as special cause (individual failure) rather than recognizing it as response to common cause system design issues. This prevents learning because the organization never addresses the system flaws that necessitate adaptation.

- Prevention of deliberate practice: Rigid procedural compliance eliminates the conditions for expertise development—challenging tasks, immediate feedback on quality (not just compliance), reflection, and progressive improvement. Organizations lose expertise development capacity.

- Knowledge transfer theater: Extensive documentation of lessons learned and best practices without the mentoring relationships and communities of practice that enable actual tacit knowledge transfer. Knowledge “management” that manages documents rather than enabling organizational learning.

The consequence is what Nonaka would call organizational knowledge destruction rather than creation. Each layer of bureaucracy, each procedure demanding rigid compliance, each investigation that treats adaptation as deviation, breaks another link in the knowledge spiral. The organization becomes progressively more ignorant about its own operations even as it generates more and more documentation claiming to capture knowledge.

Building Systems That Preserve and Develop Metis

If metis is essential for quality, if expertise develops through deliberate practice, if knowledge exists in continuous interaction between tacit and explicit forms, how do we design quality systems that work with these realities rather than against them?

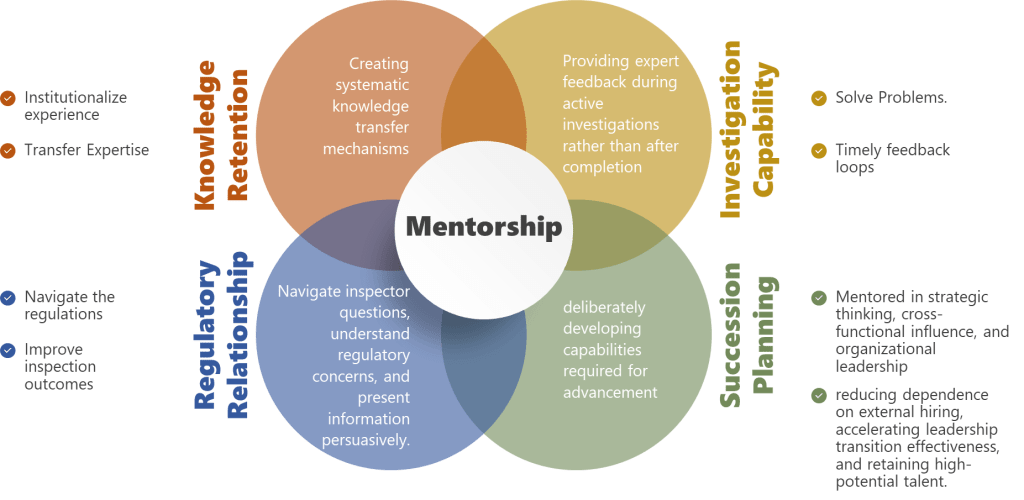

Enable genuine socialization: Create legitimate spaces for experienced operators to work directly with less experienced ones in conditions where tacit knowledge can be openly shared. This means job shadowing, mentoring relationships, and communities of practice where work-as-done can be discussed without fear of punishment for revealing that it differs from work-as-imagined.

Design for externalization: Investigation processes should aim to capture operator theories about causation, not just document procedural compliance. Use dialogue, ask operators for metaphors and analogies that help articulate tacit understanding, and create reflection opportunities where people can step back from action to describe what they know. But this requires a just culture: operators won’t externalize knowledge if doing so triggers blame.

Support deliberate practice: Instead of demanding perfect procedural compliance, create conditions for expertise development. This means progressively challenging work assignments, immediate feedback on quality of outcomes (not just compliance), reflection time between executions, and explicit permission to adapt within understood boundaries. Document decision rules rather than rigid procedures, so operators develop judgment rather than just following steps.

Apply Deming’s knowledge theory: Make quality system elements falsifiable by articulating explicit predictions that can be tested. Validated methods should predict ongoing performance, CAPAs should predict reduction in deviation frequency, training should predict capability improvement. Then test those predictions systematically and learn when they fail.

Correctly attribute variation: When operators struggle with procedures or adapt them, ask whether this is special cause (unusual circumstances) or common cause (system design doesn’t match operational reality). If it’s common cause—which Deming suggests is 94% of the time—management must redesign the system rather than demanding better compliance.

Build knowledge transfer mechanisms: Recognize that different knowledge types require different transfer approaches. Tacit knowledge needs mentoring and communities of practice, not just documentation. Explicit knowledge needs accessible documentation and effective training, not just comprehensive procedure libraries. Knowledge transfer is a property of organizational systems and culture, not just techniques.

Measure knowledge outcomes, not documentation volume: Success isn’t demonstrated by comprehensive procedures or extensive training records. It’s demonstrated by whether people can actually perform quality work, whether they have the tacit knowledge and expertise that come from deliberate practice and genuine organizational learning. Measure investigation quality by whether investigations capture knowledge that prevents recurrence, measure CAPA effectiveness by whether problems actually decrease, measure training effectiveness by whether capability improves.

The fundamental insight across all three frameworks is that knowledge is not documentation. Knowledge exists in the dynamic interaction between explicit and tacit forms, between theory and practice, between individual expertise and organizational capability. Quality systems designed around documentation—assuming that if we write comprehensive procedures and require people to follow them, quality will result—are systems designed in ignorance of how knowledge actually works.

Metis is not an obstacle to be eliminated through standardization. It is an essential organizational capability that develops through deliberate practice and transfers through socialization. Deming’s profound knowledge isn’t just theory—it’s the lens that reveals why bureaucratic systems systematically destroy the very knowledge they need to function effectively.

Building quality systems that preserve and develop metis means building systems for organizational learning, not organizational documentation. It means recognizing operator expertise as legitimate knowledge rather than deviation from procedures. It means creating conditions for deliberate practice rather than demanding perfect compliance. It means enabling knowledge conversion spirals rather than breaking them through blame and rigid control.

This is the escape from the Kafkaesque quality system. Not through more procedures, more documentation, more oversight—but through quality systems designed around how humans actually learn, how expertise actually develops, how knowledge actually exists in organizations.

The Pathologies of Bureaucracy

Sociologist Robert K. Merton studied how bureaucracies develop characteristic dysfunctions even when staffed by competent, well-intentioned people. These bureaucratic pathologies, as they are often called, are systematic problems that emerge from the structure of bureaucratic organizations rather than from individual failures.

The primary pathology is what Merton called “displacement of goals”. Bureaucracies establish rules and procedures as means to achieve organizational objectives. But over time, following the rules becomes an end in itself. Officials focus on “doing things by the book” rather than on whether the book is achieving its intended purpose.

Does this sound familiar to pharmaceutical quality professionals?

How many deviation investigations focus primarily on demonstrating that investigation procedures were followed—impact assessment completed, timeline met, all required signatures obtained—with less attention to whether the investigation actually understood what happened and why? How many CAPA effectiveness checks verify that corrective actions were implemented but don’t rigorously test whether they solved the underlying problem? How many validation studies are designed to satisfy validation protocol requirements rather than to genuinely establish method fitness for purpose?

Merton identified another pathology: bureaucratic officials are discouraged from showing initiative because they lack the authority to deviate from procedures. When problems arise that don’t fit prescribed categories, officials “pass the buck” to the next level of hierarchy. Meanwhile, the rigid adherence to rules and the impersonal attitude this generates are interpreted by those subject to the bureaucracy as arrogance or indifference.

Quality professionals will recognize this pattern. The quality oversight person on the manufacturing floor sees a problem but can’t address it without a deviation report. The deviation report triggers an investigation that can’t conclude without identifying root cause according to approved categories. The investigation assigns CAPA that requires multiple levels of approval before implementation. By the time the CAPA is implemented, the original problem may have been forgotten, or operators may have already developed their own workaround that will remain invisible to the formal system.

Dekker argues that bureaucratization creates “structural secrecy”—not active concealment, but systematic conditions under which information cannot flow. Bureaucratic accountability determines who owns data “up to where and from where on”. Once the quality staff member presents a deviation report to management, their bureaucratic accountability is complete. What happens to that information afterward is someone else’s problem.

Meanwhile, operators know things that quality staff don’t know, quality staff know things that management doesn’t know, and management knows things that regulators don’t know. Not because anyone is deliberately hiding information, but because the bureaucratic structure creates boundaries across which information doesn’t naturally flow.

This is structural secrecy, and it’s lethal to quality systems because quality depends on information about what’s actually happening. When the formal system cannot see work-as-done, cannot access operator metis, cannot flow information across bureaucratic boundaries, it’s managing an imaginary factory rather than the real one.

Compliance Theater: The Performance of Quality

If bureaucratic quality systems manage imaginary factories, they require imaginary proof that quality is maintained. Enter compliance theater—the systematic creation of documentation and monitoring that prioritizes visible adherence to requirements over substantive achievement of quality objectives.

Compliance theater has several characteristic features:

- Surface-level implementation: Organizations develop extensive documentation, training programs, and monitoring systems that create the appearance of comprehensive quality control while lacking the depth necessary to actually ensure quality.

- Metrics gaming: Success is measured through easily manipulable indicators—training completion rates, deviation closure timeliness, CAPA on-time implementation—rather than outcomes reflecting actual quality performance.

- Resource misallocation: Significant resources are devoted to compliance performance rather than substantive quality improvement, creating opportunity costs that impede genuine progress.

- Temporal patterns: Activity spikes before inspections or audits rather than continuous vigilance.

Consider CAPA effectiveness checks. In principle, these verify that corrective actions actually solved the underlying problem. But how many CAPA effectiveness checks truly test this? The typical approach: verify that the planned actions were implemented (revised SOP distributed, training completed, new equipment qualified), wait for some period during which no similar deviation occurs, declare the CAPA effective.

This is ritualistic compliance, not genuine verification. If the deviation was caused by operator metis being inadequate for the actual demands of the task, and the corrective action was “revise SOP to clarify requirements and retrain operators,” the effectiveness check should test whether operators now have the knowledge and capability to handle the task. But we don’t typically test capability. We verify that training attendance was documented and that no deviations of the exact same type have been reported in the past six months.

No deviations reported is not the same as no deviations occurring. It might mean operators developed better workarounds that don’t trigger quality system alerts. It might mean supervisors are managing issues informally rather than generating deviation reports. It might mean we got lucky.

But the paperwork says “CAPA verified effective,” and the compliance theater continues.
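To put a number on the "we got lucky" possibility, here is a minimal sketch. It assumes that recurrences of a given deviation type follow a Poisson process at the pre-CAPA baseline rate, which is a modeling assumption and not something the quality system guarantees. It asks how likely a clean six-month window would be if the CAPA had changed nothing at all. The function names and the example rate are hypothetical.

```python
import math


def p_zero_if_capa_ineffective(baseline_rate_per_month: float, window_months: float) -> float:
    """Probability of zero recurrences in the window if the CAPA had no effect.

    Assumes recurrences follow a Poisson process at the pre-CAPA baseline rate,
    so P(0 events) = exp(-rate * time).
    """
    expected_events = baseline_rate_per_month * window_months
    return math.exp(-expected_events)


def months_needed(baseline_rate_per_month: float, alpha: float = 0.05) -> float:
    """Window length at which a clean record becomes unlikely (< alpha) under 'no effect'."""
    return -math.log(alpha) / baseline_rate_per_month


# Hypothetical example: this deviation type historically occurred about 3 times a year.
rate = 3 / 12  # 0.25 per month
print(round(p_zero_if_capa_ineffective(rate, 6), 3))  # 0.223
print(math.ceil(months_needed(rate)))                 # 12
```

Under that assumption, a deviation type that historically appeared about three times a year would produce a clean six-month window roughly one time in five even with a useless CAPA, and it would take about a year of zero recurrences before a clean record became unlikely by chance alone. Under-reporting makes this worse: if fewer deviations are being reported rather than fewer occurring, the clean window means even less.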

Analytical method validation presents another arena for compliance theater. The traditional approach treats validation as an event: conduct studies demonstrating acceptable performance, generate a validation report, file with regulatory authorities, and consider the method “validated”. The implicit assumption is that a method that passed validation will continue performing acceptably forever, as long as we check system suitability.

But methods validated under controlled conditions with expert analysts and fresh materials often perform differently under routine conditions with typical analysts and aged reagents. The validation represented work-as-imagined. What happens during routine testing is work-as-done.

If we took lifecycle validation seriously, we would treat validation as predicting future performance and continuously test those predictions through Stage 3 ongoing verification. We would monitor not just system suitability pass/fail but trends suggesting performance drift. We would investigate anomalous results as potential signals of method inadequacy.

But Stage 3 verification is underdeveloped in regulatory guidance and practice. So validated methods continue being used until they fail spectacularly, at which point we investigate the failure, implement CAPA, revalidate, and resume the cycle.

The validation documentation proves the method is validated. Whether the method actually works is a separate question.
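Monitoring for drift rather than pass/fail can be sketched with an exponentially weighted moving average (EWMA) chart on control-sample results. EWMA is one standard statistical process control technique that fits here, used purely as an illustration; it is not something regulatory guidance prescribes for Stage 3. The sketch assumes the target and sigma come from validation data and uses the common textbook settings lambda = 0.2 with 3-sigma limits. The function name and recovery values are hypothetical.

```python
import math
from typing import Optional, Sequence


def ewma_first_signal(results: Sequence[float], target: float, sigma: float,
                      lam: float = 0.2, width: float = 3.0) -> Optional[int]:
    """Return the 0-based index of the first EWMA point outside its limits, or None.

    z_t = lam * x_t + (1 - lam) * z_{t-1}, starting from z_0 = target.
    Limits are target +/- width * sigma * sqrt(lam / (2 - lam) * (1 - (1 - lam)**(2t))),
    which widen toward their steady-state value as t grows.
    """
    z = target
    for t, x in enumerate(results, start=1):
        z = lam * x + (1 - lam) * z
        half_width = width * sigma * math.sqrt(lam / (2 - lam) * (1 - (1 - lam) ** (2 * t)))
        if abs(z - target) > half_width:
            return t - 1
    return None


# Hypothetical control-sample recoveries (%): stable, then a slow downward drift.
recoveries = [100.2, 99.8, 100.1, 99.9, 100.0, 100.3, 99.7, 100.1,
              99.0, 98.8, 98.5, 98.6, 98.2, 98.0, 97.9, 97.6]
assert all(95.0 <= r <= 105.0 for r in recoveries)  # every single run "passes"
print(ewma_first_signal(recoveries, target=100.0, sigma=1.0))  # 13
```

Every one of these hypothetical results sits comfortably inside a 95 to 105% acceptance range, so a pass/fail view sees nothing. The EWMA accumulates the small, consistent downward shift and flags it at the fourteenth run, well before any individual result would fail.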

The Bureaucratic Trap: How Good Systems Go Bad

I need to emphasize: pharmaceutical quality systems did not become bureaucratic because quality professionals are incompetent or indifferent. Bureaucratization happens through the interaction of legitimate pressures that push systems toward forms that are legible, auditable, and defensible but increasingly disconnected from the complex reality they’re meant to govern.

- Regulatory pressure: Inspectors need evidence that quality is controlled. The most auditable evidence is documentation showing compliance with established procedures. Over time, quality systems optimize for auditability rather than effectiveness.

- Liability pressure: When quality failures occur, organizations face regulatory action, litigation, and reputational damage. The best defense is demonstrating that all required procedures were followed. This incentivizes comprehensive documentation even when that documentation doesn’t enhance actual quality.

- Complexity: Pharmaceutical manufacturing is genuinely complex, with thousands of variables affecting product quality. Reducing this complexity to manageable procedures requires simplification. The simplification is necessary, but organizations forget that it’s a reduction rather than the full reality.

- Scale: As organizations grow, quality systems must work across multiple sites, products, and regulatory jurisdictions. Standardization is necessary for consistency, but standardization requires abstracting away local context—precisely the domain where metis operates.

- Knowledge loss: When experienced operators leave, their tacit knowledge goes with them. Organizations try to capture this knowledge in ever-more-detailed procedures, but metis cannot be fully proceduralized. The detailed procedures give the illusion of captured knowledge while the actual knowledge has vanished.

- Management distance: Quality executives are increasingly distant from manufacturing operations. They manage through metrics, dashboards, and reports rather than direct observation. These tools require legibility—quantitative measures, standardized reports, formatted data. The gap between management’s understanding and operational reality grows.

- Inspection trauma: After regulatory inspections that identify deficiencies, organizations often respond by adding more procedures, more documentation, more oversight. The response to bureaucratic dysfunction is more bureaucracy.

Each of these pressures is individually rational. Taken together, they reproduce the conditions for failure that Scott identified: administrative ordering of complex systems, confidence in formal procedures and documentation, authority willing to enforce compliance, and, increasingly, a weakened operational environment that can’t effectively resist.

What we get is the Kafkaesque quality system: elaborate, well-documented, apparently flawless, generating enormous amounts of evidence that it’s functioning properly, and potentially failing to ensure the quality it was designed to ensure.

The Consequences: When Bureaucracy Defeats Quality

The most insidious aspect of bureaucratic quality systems is that they can fail quietly. Unlike catastrophic contamination events or major product recalls, bureaucratic dysfunction produces gradual degradation that may go unnoticed because all the quality metrics say everything is fine.

Investigation without learning: Investigations that focus on completing investigation procedures rather than understanding causal mechanisms don’t generate knowledge that prevents recurrence. Organizations keep investigating the same types of problems, implementing CAPAs that check compliance boxes without addressing underlying issues, and declaring investigations “closed” when the paperwork is complete.

Research on incident investigation culture reveals what investigators call “new blame”—a dysfunction where investigators avoid examining human factors for fear of seeming accusatory, instead quickly attributing problems to “unclear procedures” or “inadequate training” without probing what actually happened. This appears to be blame-free but actually prevents learning by refusing to engage with the complexity of how humans interact with systems.

Analytical unreliability: Methods that “passed validation” may be silently failing under routine conditions, generating subtly inaccurate results that don’t trigger obvious failures but gradually degrade understanding of product quality. Nobody knows because Stage 3 verification isn’t rigorous enough to detect drift.

Operator disengagement: When operators know that the formal procedures don’t match operational reality, when they’re required to document work-as-imagined while performing work-as-done, when they see problems but reporting them triggers bureaucratic responses that don’t fix anything, they disengage. They stop reporting. They develop workarounds. They focus on satisfying the visible compliance requirements rather than ensuring genuine quality.

This is exactly what Merton predicted: bureaucratic structures that punish initiative and reward procedural compliance create officials who follow rules rather than thinking about purpose.

Resource misallocation: Organizations spend enormous resources on compliance activities that satisfy audit requirements without enhancing quality. Documentation of training that doesn’t transfer knowledge. CAPA systems that process hundreds of actions of marginal effectiveness. Validation studies that prove compliance with validation requirements without establishing genuine fitness for purpose.

Structural secrecy: Critical information that front-line operators possess about equipment quirks, material variability, and process issues doesn’t flow to quality management because bureaucratic boundaries prevent information transfer. Management makes decisions based on formal reports that reflect work-as-imagined while work-as-done remains invisible.

Loss of resilience: Organizations that depend on rigid procedures and standardized responses become brittle. When unexpected situations arise—novel contamination sources, unusual material properties, equipment failures that don’t fit prescribed categories—the organization can’t adapt because it has systematically eliminated the metis that enables adaptive response.

This last point deserves emphasis. Quality systems should make organizations more resilient—better able to maintain quality despite disturbances and variability. But bureaucratic quality systems can do the opposite. By requiring that everything be prescribed in advance, they eliminate the adaptive capacity that enables resilience.

The Alternative: High Reliability Organizations

So how do we escape the bureaucratic trap? The answer emerges from studying what researchers Karl Weick and Kathleen Sutcliffe call “High Reliability Organizations”—organizations that operate in complex, hazardous environments yet maintain exceptional safety records.

Nuclear aircraft carriers. Air traffic control systems. Wildland firefighting teams. These organizations can’t afford the luxury of bureaucratic dysfunction because failure means catastrophic consequences. Yet they operate in environments at least as complex as pharmaceutical manufacturing.

Weick and Sutcliffe identified five principles that characterize HROs:

Preoccupation with failure: HROs treat any anomaly as a potential symptom of deeper problems. They don’t wait for catastrophic failures. They investigate near-misses rigorously. They encourage reporting of even minor issues.

This is the opposite of compliance-focused quality systems that measure success by absence of major deviations and treat minor issues as acceptable noise.

Reluctance to simplify: HROs resist the temptation to reduce complex situations to simple categories. They maintain multiple interpretations of what’s happening rather than prematurely converging on a single explanation.

This challenges the bureaucratic need for legibility. It’s harder to manage systems that resist simple categorization. But it’s more effective than managing simplified representations that don’t reflect reality.

Sensitivity to operations: HROs maintain ongoing awareness of what’s happening at the sharp end where work is actually done. Leaders stay connected to operational reality rather than managing through dashboards and metrics.

This requires bridging the gap between work-as-imagined and work-as-done. It requires seeing metis rather than trying to eliminate it.

Commitment to resilience: HROs invest in adaptive capacity—the ability to respond effectively when unexpected situations arise. They practice scenario-based training. They maintain reserves of expertise. They design systems that can accommodate surprises.

This is different from bureaucratic systems that try to prevent all surprises through comprehensive procedures.

Deference to expertise: In HROs, authority migrates to whoever has relevant expertise regardless of hierarchical rank. During anomalous situations, the person with the best understanding of what’s happening makes decisions, even if that’s a junior operator rather than a senior manager.

Weick describes this as valuing “greasy hands knowledge”—the practical, experiential understanding of people directly involved in operations. This is metis by another name.

These principles directly challenge bureaucratic pathologies. Where bureaucracies focus on following established procedures, HROs focus on constant vigilance for signs that procedures aren’t working. Where bureaucracies demand hierarchical approval, HROs defer to frontline expertise. Where bureaucracies simplify for legibility, HROs maintain complexity.

Can pharmaceutical quality systems adopt HRO principles? Not easily, because the regulatory environment demands legibility and auditability. But neither can pharmaceutical quality systems afford continued bureaucratic dysfunction as complexity increases and the gap between work-as-imagined and work-as-done widens.

Building Falsifiable Quality Systems

Throughout this blog I’ve advocated for what I call falsifiable quality systems—systems designed to make testable predictions that could be proven wrong through empirical observation.

Traditional quality systems make unfalsifiable claims: “This method was validated according to ICH Q2 requirements.” “Procedures are followed.” “CAPA prevents recurrence.” These are statements about activities that occurred in the past, not predictions about future performance.

Falsifiable quality systems make explicit predictions: “This analytical method will generate reportable results within ±5% of true value under normal operating conditions.” “When operated within the defined control strategy, this process will consistently produce product meeting specifications.” “The corrective action implemented will reduce this deviation type by at least 50% over the next six months”.

These predictions can be tested. If ongoing data show the method isn’t achieving ±5% accuracy, the prediction is falsified: the method isn’t performing as validation claimed. If deviations haven’t fallen by at least 50% after CAPA implementation, the prediction is falsified: the corrective action didn’t work as predicted.

Falsifiable systems create accountability for effectiveness rather than compliance. They force honest engagement with whether quality systems are actually ensuring quality.
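One way to make a prediction like the ±5% accuracy claim operational is to record it as data and test it against results where the true value is known, such as reference standards or spiked control samples. This is a minimal sketch assuming such paired results exist; the class, the method ID, and the numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass(frozen=True)
class AccuracyPrediction:
    """Falsifiable claim: every reportable result lies within +/- tolerance_pct of the true value."""
    method_id: str
    tolerance_pct: float

    def violations(self, pairs: Sequence[Tuple[float, float]]) -> List[int]:
        """Indices of (measured, true_value) pairs whose relative error exceeds the tolerance."""
        out = []
        for i, (measured, true_value) in enumerate(pairs):
            if true_value <= 0:
                raise ValueError("true_value must be positive for a relative error")
            rel_err_pct = abs(measured - true_value) / true_value * 100.0
            if rel_err_pct > self.tolerance_pct:
                out.append(i)
        return out

    def is_falsified(self, pairs: Sequence[Tuple[float, float]]) -> bool:
        """Strict reading: a single out-of-tolerance result falsifies the claim."""
        return bool(self.violations(pairs))


# Hypothetical control samples with a known value of 50.0 units.
pred = AccuracyPrediction(method_id="HPLC-ASSAY-01", tolerance_pct=5.0)
pairs = [(49.1, 50.0), (51.2, 50.0), (47.2, 50.0)]
print(pred.violations(pairs))  # [2]
```

The design choice here is deliberately strict: one result outside the tolerance falsifies the claim. A real program might instead predict a maximum out-of-tolerance rate, but either way the prediction is written down before the data arrive, so it is able to fail.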

This connects directly to HRO principles. Preoccupation with failure means treating falsification seriously—when predictions fail, investigating why. Reluctance to simplify means acknowledging the complexity that makes some predictions uncertain. Sensitivity to operations means using operational data to test predictions continuously. Commitment to resilience means building systems that can recognize and respond when predictions fail.

It also requires what researchers call “just culture”—systems that distinguish between honest errors, at-risk behaviors, and reckless violations. Bureaucratic blame cultures punish all failures, driving problems underground. “No-blame” cultures avoid examining human factors, preventing learning. Just cultures examine what happened honestly, including human decisions and actions, while focusing on system improvement rather than individual punishment.

In just culture, when a prediction is falsified (a validated method fails, a CAPA doesn’t prevent recurrence, operators can’t follow procedures), the response isn’t to blame individuals or to paper over the gap with more documentation. The response is to examine why the prediction was wrong and redesign the system so that the prediction holds.

This requires the intellectual honesty to acknowledge when quality systems aren’t working. It requires willingness to look at work-as-done rather than only work-as-imagined. It requires recognizing operator metis as legitimate knowledge rather than deviation from procedures. It requires valuing learning over legibility.

Practical Steps: Escaping the Castle

How do pharmaceutical quality organizations actually implement these principles? How do we escape Kafka’s Castle once we’ve built it?

I won’t pretend this is easy. The pressures toward bureaucratization are real and powerful. Regulatory requirements demand legibility. Corporate management requires standardization. Inspection findings trigger defensive responses. The path of least resistance is always more procedures, more documentation, more oversight.

But some concrete steps can bend the trajectory away from bureaucratic dysfunction toward genuine effectiveness:

Make quality systems falsifiable: For every major quality commitment—validated analytical methods, qualified processes, implemented CAPAs—articulate explicit, testable predictions about future performance. Then systematically test those predictions through ongoing monitoring. When predictions fail, investigate why and redesign systems rather than rationalizing the failure away.

Close the WAI/WAD gap: Create safe mechanisms for understanding work-as-done. Don’t punish operators for revealing that procedures don’t match reality. Instead, use this information to improve procedures or acknowledge that some adaptation is necessary and train operators in effective adaptation rather than pretending perfect procedural compliance is possible.

Value metis: Recognize that operator expertise, analytical judgment, and troubleshooting capability are not obstacles to standardization but essential elements of quality systems. Document not just procedures but decision rules for when to adapt. Create mechanisms for transferring tacit knowledge. Include experienced operators in investigation and CAPA design.

Practice just culture: Distinguish between system-induced errors, at-risk behaviors under production pressure, and genuinely reckless violations. Focus investigations on understanding causal factors rather than assigning blame or avoiding blame. Hold people accountable for reporting problems and learning from them, not for making the inevitable errors that complex systems generate.

Implement genuine Stage 3 verification: Treat validation as predicting ongoing performance rather than certifying past performance. Monitor analytical methods, processes, and quality system elements for signs that their performance is drifting from predictions. Detect and address degradation early rather than waiting for catastrophic failure.

Bridge bureaucratic boundaries: Create information flows that cross organizational boundaries so that what operators know reaches quality management, what quality management knows reaches site leadership, and what site leadership knows shapes corporate quality strategy. This requires fighting against structural secrecy, perhaps through regular gemba walks, operator inclusion in quality councils, and bottom-up reporting mechanisms that protect operators who surface uncomfortable truths.

Test CAPA effectiveness honestly: Don’t just verify that corrective actions were implemented. Test whether they solved the problem. If a deviation was caused by inadequate operator capability, test whether capability improved. If it was caused by an equipment limitation, test whether the limitation was eliminated. If the problem hasn’t recurred but you haven’t tested whether your corrective action was responsible, you don’t know whether the CAPA worked; you may simply have gotten lucky.
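Where the corrective action targets a recurring deviation type, testing whether it solved the problem can be made quantitative by comparing pre- and post-CAPA rates. This is a minimal sketch assuming deviations occur as a Poisson process in each period: conditional on the total count, the post-CAPA count is binomial under "no change," which yields an exact one-sided p-value. The function name and the counts are hypothetical.

```python
import math


def capa_reduction_p_value(pre_count: int, pre_months: float,
                           post_count: int, post_months: float) -> float:
    """One-sided exact p-value for 'the post-CAPA rate is lower than the pre-CAPA rate'.

    Assumes a Poisson process in each period. Given the total n, the post-period
    count is Binomial(n, p0) under 'no change', with p0 = post_months / (pre + post).
    Returns P(X <= post_count); small values are evidence of a real reduction.
    """
    n = pre_count + post_count
    p0 = post_months / (pre_months + post_months)
    return sum(math.comb(n, k) * p0 ** k * (1 - p0) ** (n - k)
               for k in range(post_count + 1))


# Hypothetical: 12 deviations in the 12 months before, 1 in the 6 months after.
print(round(capa_reduction_p_value(12, 12, 1, 6), 3))  # 0.039
# Hypothetical: 3 in the 12 months before, 0 in the 6 months after.
print(round(capa_reduction_p_value(3, 12, 0, 6), 3))   # 0.296
```

In the first hypothetical case, the drop is reasonably strong evidence of a real reduction. In the second, zero recurrences in six months gives p ≈ 0.30, which is essentially no evidence that the CAPA did anything.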

Question metrics that measure activity rather than outcomes: Training completion rates don’t tell you whether people learned anything. Deviation closure timeliness doesn’t tell you whether investigations found root causes. CAPA implementation rates don’t tell you whether CAPAs were effective. Replace these with metrics that test quality system predictions: analytical result accuracy, process capability indices, deviation recurrence rates after CAPA, investigation quality assessed by independent review.
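Process capability is one of the outcome metrics named above, and it is simple to compute. This is a minimal sketch assuming roughly normal data; it uses the overall sample standard deviation, which strictly makes it Ppk, since a true Cpk uses a within-subgroup sigma estimate. The function name, assay values, and limits are hypothetical.

```python
import statistics
from typing import Sequence


def ppk(results: Sequence[float], lsl: float, usl: float) -> float:
    """Distance from the mean to the nearer spec limit, in units of 3 standard deviations.

    Uses the overall sample standard deviation (Ppk-style). Assumes roughly normal data.
    """
    if len(results) < 2:
        raise ValueError("need at least two results to estimate spread")
    mean = statistics.mean(results)
    sd = statistics.stdev(results)
    return min(usl - mean, mean - lsl) / (3 * sd)


# Hypothetical assay results (% label claim) against a 95.0 to 105.0 specification.
assay = [99.1, 100.4, 98.7, 101.2, 99.8, 100.6, 99.3, 100.9]
print(round(ppk(assay, lsl=95.0, usl=105.0), 2))  # 1.83
```

A value around 1.83 means the mean sits more than five standard deviations from the nearer limit. Values of 1.33 and above are a commonly cited minimum, but the more important property is that, unlike a training completion rate, this number falls when the process actually degrades.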

Embrace productive failure: When quality system elements fail—when validated methods prove unreliable, when procedures can’t be followed, when CAPAs don’t prevent recurrence—treat these as opportunities to improve systems rather than problems to be concealed or rationalized. HRO preoccupation with failure means seeing small failures as gifts that reveal system weaknesses before they cause catastrophic problems.

Continuous improvement, genuinely practiced: Implement PDCA (Plan-Do-Check-Act) or PDSA (Plan-Do-Study-Act) cycles not as compliance requirements but as systematic methods for testing changes before full implementation. Use small-scale experiments to determine whether proposed improvements actually improve rather than deploying changes enterprise-wide based on assumption.

Reduce the burden of irrelevant documentation: Much compliance documentation serves no quality purpose—it exists to satisfy audit requirements or regulatory expectations that may themselves be bureaucratic artifacts. Distinguish between documentation that genuinely supports quality (specifications, test results, deviation investigations that find root causes) and documentation that exists to demonstrate compliance (training attendance rosters for content people already know, CAPA effectiveness checks that verify nothing). Fight to eliminate the latter, or at least prevent it from crowding out the former.

The Politics of De-Bureaucratization

Here’s the uncomfortable truth: escaping the Kafkaesque quality system requires political will at the highest levels of organizations.

Quality professionals can implement some improvements within their spheres of influence—better investigation practices, more rigorous CAPA effectiveness checks, enhanced Stage 3 verification. But truly escaping the bureaucratic trap requires challenging structures that powerful constituencies benefit from.

Regulatory authorities benefit from legibility—it makes inspection and oversight possible. Corporate management benefits from standardization and quantitative metrics—they enable governance at scale. Quality bureaucracies themselves benefit from complexity and documentation—they justify resources and headcount.

Operators and production management often bear the costs of bureaucratization—additional documentation burden, inability to adapt to reality, blame when gaps between procedures and practice are revealed. But they’re typically the least powerful constituencies in pharmaceutical organizations.

Changing this dynamic requires quality leaders who understand that their role is ensuring genuine quality rather than managing compliance theater. It requires site leaders who recognize that bureaucratic dysfunction threatens product quality even when all audit checkboxes are green. It requires regulatory relationships mature enough to discuss work-as-done openly rather than pretending work-as-imagined is reality.

Scott argues that successful resistance to high-modernist schemes depends on civil society’s capacity to push back. In pharmaceutical organizations, this means empowering operational voices—the people with metis, with greasy-hands knowledge, with direct experience of the gap between procedures and reality. It means creating forums where they can speak without fear of retaliation. It means quality leaders who listen to operational expertise even when it reveals uncomfortable truths about quality system dysfunction.

This is threatening to bureaucratic structures precisely because it challenges their premise—that quality can be ensured through comprehensive documented procedures enforced by hierarchical oversight. If we acknowledge that operator metis is essential, that adaptation is necessary, that work-as-done will never perfectly match work-as-imagined, we’re admitting that the Castle isn’t really flawless.

But the Castle never was flawless. Kafka knew that. The servant destroying paperwork because he couldn’t figure out the recipient wasn’t an aberration—it was a glimpse of reality. The question is whether we continue pretending the bureaucracy works perfectly while it fails quietly, or whether we build quality systems honest enough to acknowledge their limitations and resilient enough to function despite them.

The Quality System We Need

Pharmaceutical quality systems exist in genuine tension. They must be rigorous enough to prevent failures that harm patients. They must be documented well enough to satisfy regulatory scrutiny. They must be standardized enough to work across global operations. These are not trivial requirements, and they cannot be dismissed as mere bureaucratic impositions.

But they must also be realistic enough to accommodate the complexity of manufacturing, flexible enough to incorporate operator metis, honest enough to acknowledge the gap between procedures and practice, and resilient enough to detect and correct performance drift before catastrophic failures occur.

We will not achieve this by adding more procedures, more documentation, more oversight. We’ve been trying that approach for decades, and the result is the bureaucratic trap we’re in. Every new procedure adds another layer to the Castle, another barrier between quality management and operational reality, another opportunity for the gap between work-as-imagined and work-as-done to widen.

Instead, we need quality systems designed around falsifiable predictions tested through ongoing verification. Systems that value learning over legibility. Systems that bridge bureaucratic boundaries to incorporate greasy-hands knowledge. Systems that distinguish between productive compliance and compliance theater. Systems that acknowledge complexity rather than reducing it to manageable simplifications that don’t reflect reality.

We need, in short, to stop building the Castle and start building systems for humans doing real work under real conditions.

Kafka never finished The Castle. The manuscript breaks off mid-sentence. Whether K. ever reaches the Castle, whether the officials ever explain themselves, whether the flawless bureaucracy ever acknowledges its contradictions—we’ll never know.

But pharmaceutical quality professionals don’t have the luxury of leaving the story unfinished. We’re living in it. Every day we choose whether to add another procedure to the Castle or to build something different. Every deviation investigation either perpetuates compliance theater or pursues genuine learning. Every CAPA either checks boxes or solves problems. Every validation either creates falsifiable predictions or generates documentation that satisfies audits without ensuring quality.

The bureaucratic trap is powerful precisely because each individual choice seems reasonable. Each procedure addresses a real gap. Each documentation requirement responds to an audit finding. Each oversight layer prevents a potential problem. And gradually, imperceptibly, we build a system that looks comprehensive and rigorous and “flawless” but may or may not be ensuring the quality it exists to ensure.

Escaping the trap requires intellectual honesty about whether our quality systems are working. It requires organizational courage to acknowledge gaps between procedures and practice. It requires regulatory maturity to discuss work-as-done rather than pretending work-as-imagined is reality. It requires quality leadership that values effectiveness over auditability.

Most of all, it requires remembering why we built quality systems in the first place: not to satisfy inspections, not to generate documentation, not to create employment for quality professionals, but to ensure that medicines reaching patients are safe, effective, and consistently manufactured to specification.

That goal is not served by Kafkaesque bureaucracy. It’s not served by the Castle, with its mysterious officials and contradictory explanations and flawless procedures that somehow involve destroying paperwork when nobody knows what to do with it.

It’s served by systems designed for humans, systems that acknowledge complexity, systems that incorporate the metis of people who actually do the work, systems that make falsifiable predictions and honestly evaluate whether those predictions hold.

It’s served by escaping the bureaucratic trap.

The question is whether pharmaceutical quality leadership has the courage to leave the Castle.