When I teach an introductory risk management class, I usually open with an icebreaker: “What is the riskiest activity you can think of doing?” Inevitably you get some version of skydiving, swimming with sharks, or jumping off bridges. This activity is great because it starts a conversation about likelihood and severity. At heart, the question brings out the concept of risk important activities and the nature of controls.

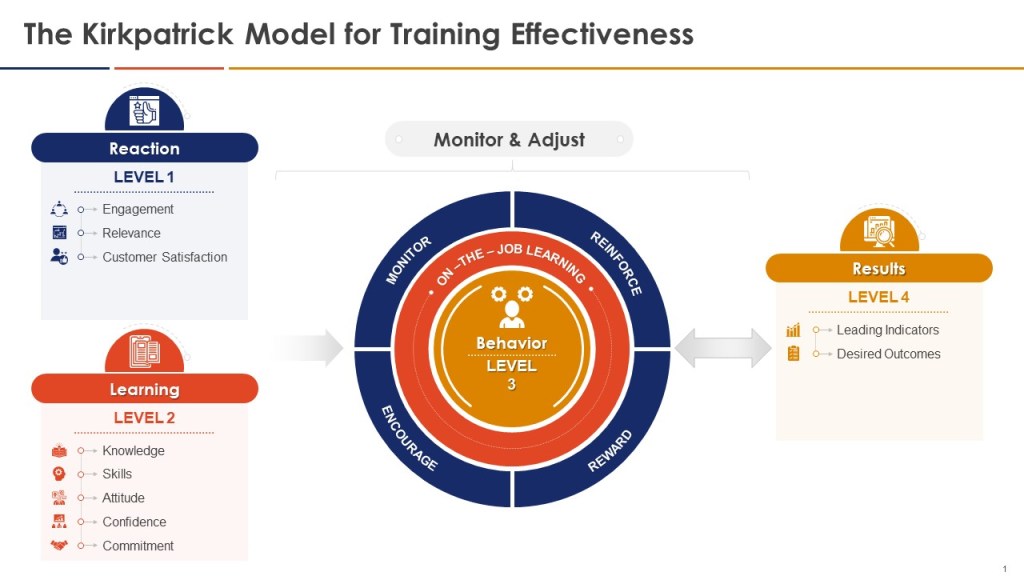

The things people think of, such as skydiving, are great examples of activities that are surrounded by other activities that control risk. The very activity is based on reducing risk as low as possible, accepting what remains, and then proceeding along the safest possible pathway. These risk important activities are the mechanisms just before a critical step that:

- Ensure the appropriate transfer of information and skill

- Ensure the appropriate number of actions to reduce risk

- Influence the presence or effectiveness of barriers

- Influence the ability to maintain positive control of the moderation of hazards

Risk important activities are an important concept in safety thinking and sit at the center of many human error reduction tools and practices. They are all about thinking through the right set of controls, building them into the procedure, and successfully executing them before reaching the critical step, the point of no return. Checklists are a great example of this mindset at work, but there are many other ways of doing them.

Hospitals use a great thought process, the “Five Rights of Safe Medication Practices”: 1) right patient, 2) right drug, 3) right dose, 4) right route, and 5) right time. Next time you are getting medication in a doctor’s office or hospital, watch what your caregiver is doing and how it fits into that process. Those are examples of risk important activities.
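To make the checklist idea concrete, here is a minimal sketch of the five rights written as a check that runs just before the critical step. The `Dose` record, its field names, and the one-hour time window are my own assumptions for illustration, not a clinical standard or any hospital system's actual logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dose:
    """One medication event, either as ordered or as about to be given.

    Hypothetical record for illustration; the fields are assumptions.
    """
    patient_id: str
    drug: str
    dose_mg: float
    route: str
    hour: int  # hour of day, 0-23


def five_rights_failures(ordered: Dose, actual: Dose, window_hours: int = 1) -> list:
    """Return the 'rights' that do not match; an empty list means proceed."""
    gap = abs(ordered.hour - actual.hour) % 24
    gap = min(gap, 24 - gap)  # treat 23:00 and 00:00 as one hour apart
    checks = {
        "right patient": ordered.patient_id == actual.patient_id,
        "right drug": ordered.drug == actual.drug,
        "right dose": ordered.dose_mg == actual.dose_mg,
        "right route": ordered.route == actual.route,
        "right time": gap <= window_hours,  # assumed tolerance, not a standard
    }
    return [name for name, ok in checks.items() if not ok]


ordered = Dose("patient-001", "drug-A", 500.0, "oral", 8)
about_to_give = Dose("patient-001", "drug-A", 500.0, "intravenous", 11)
print(five_rights_failures(ordered, about_to_give))
# ['right route', 'right time']
```

The point of the sketch is the shape of the control: every check is run and every failure is named before the irreversible action, rather than stopping at the first mismatch.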

Assessing controls during risk assessment

Risk is affected by the overall effectiveness of any controls that are in place.

The key aspects of controls are:

- the mechanism by which the controls are intended to modify risk

- whether the controls are in place, are capable of operating as intended, and are achieving the expected results

- whether there are shortcomings in the design of controls or the way they are applied

- whether there are gaps in controls

- whether controls function independently, or if they need to function collectively to be effective

- whether there are factors, conditions, vulnerabilities or circumstances that can reduce or eliminate control effectiveness including common cause failures

- whether controls themselves introduce additional risks.

A risk can have more than one control and controls can affect more than one risk.

We always want to distinguish between controls that change likelihood, consequences, or both, and controls that change how the burden of risk is shared between stakeholders.

Any assumptions made during risk analysis about the actual effect and reliability of controls should be validated where possible, with a particular emphasis on individual or combinations of controls that are assumed to have a substantial modifying effect. This should take into account information gained through routine monitoring and review of controls.
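One way to keep these aspects visible during an assessment is to record controls and risks as a many-to-many mapping. The sketch below is only an illustration under my own assumptions: the `Control` record, the `Effect` categories, and the example control and risk names are hypothetical. It shows three of the questions above: which controls cover each risk, where there are gaps, and which assumed effects still need validation.

```python
from dataclasses import dataclass
from enum import Enum


class Effect(Enum):
    """What a control is intended to change (categories from the text above)."""
    LIKELIHOOD = "likelihood"
    CONSEQUENCE = "consequence"
    BOTH = "likelihood and consequence"
    SHARING = "how the burden of risk is shared"


@dataclass
class Control:
    name: str
    effect: Effect
    risks: frozenset          # names of the risks this control modifies
    in_place: bool = True
    validated: bool = False   # has the assumed effect been checked?


def controls_by_risk(controls):
    """Map each risk to the controls that act on it (many-to-many)."""
    index = {}
    for control in controls:
        for risk in sorted(control.risks):
            index.setdefault(risk, []).append(control.name)
    return index


def uncontrolled(risks, controls):
    """Risks with no control in place: the gaps."""
    covered = set()
    for control in controls:
        if control.in_place:
            covered |= control.risks
    return [risk for risk in risks if risk not in covered]


def needs_validation(controls):
    """Controls in place whose assumed effect has not been validated."""
    return [c.name for c in controls if c.in_place and not c.validated]


# Hypothetical example data.
controls = [
    Control("second-person verification", Effect.LIKELIHOOD,
            frozenset({"label mix-up", "wrong quantity"}), validated=True),
    Control("line clearance", Effect.LIKELIHOOD, frozenset({"label mix-up"})),
    Control("insurance", Effect.SHARING, frozenset({"batch loss"})),
]
risks = ["label mix-up", "wrong quantity", "batch loss", "contamination"]

print(uncontrolled(risks, controls))   # ['contamination']
print(needs_validation(controls))      # ['line clearance', 'insurance']
```

A structure like this does not judge effectiveness for you. It only makes gaps and unvalidated assumptions hard to overlook.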

Risk Important Activities, Critical Steps and Process

Critical steps are the way we meet our critical-to-quality requirements: they are the activities that ensure our product or service meets the needs of the organization.

These critical steps are the points of no-return, the point where the work-product is transformed into something else. Risk important activities are what we do to remove the danger of executing that critical step.

Beyond that critical step, you have rejection or rework. When I am cooking, a lot of the prep work is a mixture of critical steps from which there is no return. If I break an egg wrong and get eggshells in my batter, some degree of rework is necessary. This is true for all our processes.

The risk-based approach to a process is to understand the critical steps and the controls that mitigate their risks.

We are thinking through the following:

- Critical Step: The action that triggers irreversibility. Think in terms of critical-to-quality attributes.

- Input: What came before in the process

- Output: The desired result (positive) or the possible difficulty (negative)

- Preconditions: Technical conditions that must exist before the critical step

- Resources: What is needed for the critical step to be completed

- Local factors: Things that could influence the critical step. When human beings are involved, this is usually what can influence the performer’s thinking and actions before and during the critical step

- Defenses: Controls, barriers and safeguards

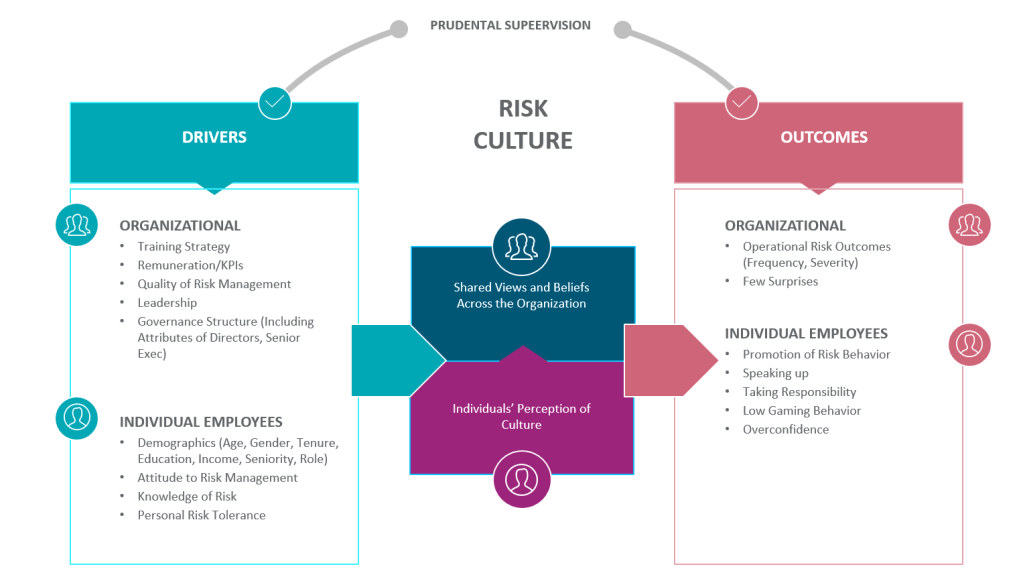
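The elements listed above can be captured as a simple record, so that each critical step is reviewed the same way. This is a minimal sketch: the `CriticalStep` class, its field names, and the `open_items` check are my own assumptions, and the example reuses the egg from the cooking analogy.

```python
from dataclasses import dataclass, field


@dataclass
class CriticalStep:
    """Hypothetical record of one critical step and what surrounds it."""
    action: str                 # the action that triggers irreversibility
    inputs: list                # what came before in the process
    desired_output: str         # the positive result
    possible_difficulty: str    # the negative result
    preconditions: dict = field(default_factory=dict)  # condition -> met?
    resources: dict = field(default_factory=dict)      # resource -> available?
    local_factors: list = field(default_factory=list)  # influences on the performer
    defenses: list = field(default_factory=list)       # controls, barriers, safeguards

    def open_items(self):
        """List what should be resolved before executing the step."""
        items = [f"precondition not met: {name}"
                 for name, met in self.preconditions.items() if not met]
        items += [f"resource missing: {name}"
                  for name, available in self.resources.items() if not available]
        if not self.defenses:
            items.append("no defenses identified")
        return items


step = CriticalStep(
    action="crack egg into batter",
    inputs=["mixed dry ingredients", "eggs"],
    desired_output="smooth batter",
    possible_difficulty="eggshell in batter",
    preconditions={"dry ingredients mixed": True},
    resources={"separate cracking bowl": False},
    local_factors=["rushing", "distraction"],
    defenses=["crack into a separate bowl first"],
)
print(step.open_items())  # ['resource missing: separate cracking bowl']
```

An empty list from `open_items` does not mean the step is safe, only that the recorded preconditions, resources, and defenses are accounted for.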

Risk Management Mindset

Good risk management requires a mindset that includes the following attributes:

- Expect to be surprised: Our processes are usually underspecified and there is a lot of hidden knowledge. Risk management serves to interrogate the unknowns

- Possess a chronic sense of unease: There is no such thing as perfect processes, procedures, training, design, planning. Past performance is not a guarantee of future success.

- Bend, not break: Everything is dynamic, especially risk. Quality comes from adaptability.

- Learn: Learn from what goes well and from mistakes; build a learning culture.

- Embrace humility: No one knows everything; bring in those who know what you do not.

- Acknowledge differences between work-as-imagined and work-as-done: Work to reduce the differences.

- Value collaboration: Diversity of input

- Drive out subjectivity: Understand how opinions are formed and decisions are made.

- Systems Thinking: Performance emerges from complex, interconnected and interdependent systems and their components

The Role of Monitoring

One cannot control risk, or even successfully identify it, unless a system is able to flexibly monitor both its own performance (what happens inside the system’s boundary) and what happens in the environment (outside the system’s boundary). Monitoring improves the ability to cope with possible risks.

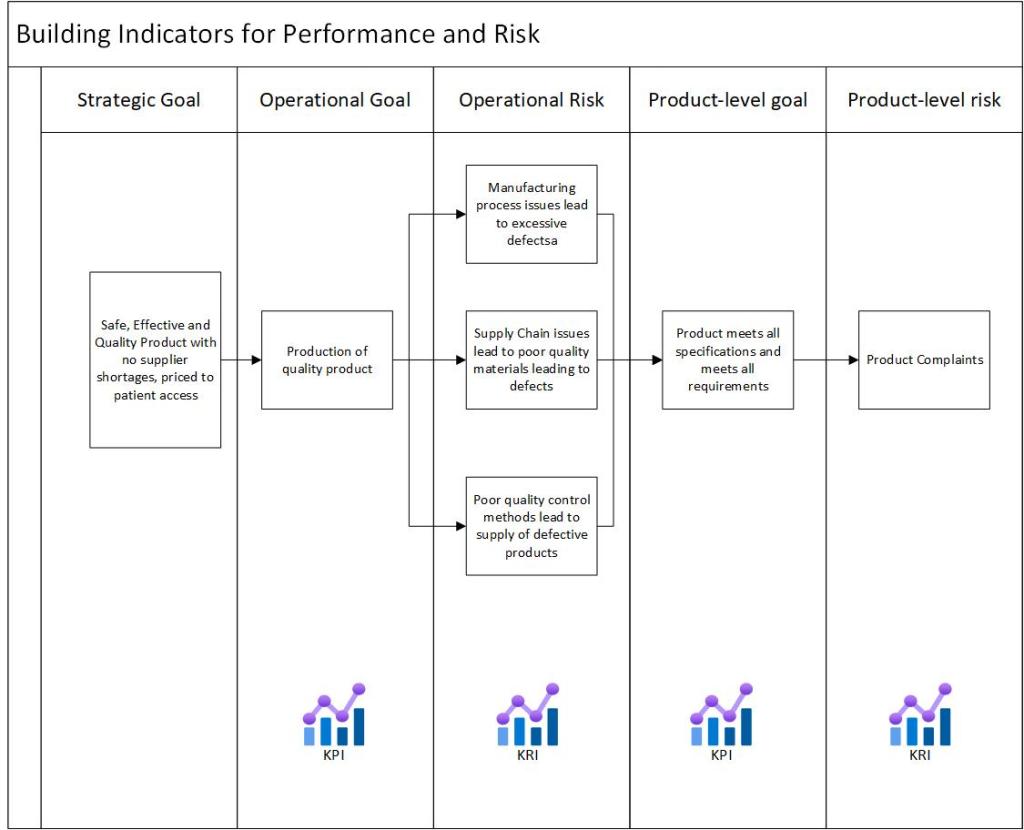

When performing the risk assessment, challenge existing monitoring and ensure that the right indicators are in place. But remember, monitoring itself is a low-effectiveness control.

Ensure that there are leading indicators, which can be used as valid precursors for changes and events that are about to happen.

For each monitoring control, ask yourself the following:

| Aspect | Questions to ask |
| --- | --- |
| Indicator | How have the indicators been defined? (By analysis, by tradition, by industry consensus, by the regulator, by international standards, etc.) |
| Relevance | When was the list created? How often is it revised? On which basis is it revised? Who is responsible for maintaining the list? |
| Type | How many of the indicators are of the ‘leading’ type and how many are of the ‘lagging’ type? Do indicators refer to single or aggregated measurements? |
| Validity | How is the validity of an indicator established (regardless of whether it is leading or lagging)? Do indicators refer to an articulated process model, or just to ‘common sense’? |
| Delay | For lagging indicators, how long is the typical lag? Is it acceptable? |
| Measurement type | What is the nature of the measurements? Qualitative or quantitative? (If quantitative, what kind of scaling is used?) |
| Measurement frequency | How often are the measurements made? (Continuously, regularly, every now and then?) |
| Analysis | What is the delay between measurement and analysis/interpretation? How many of the measurements are directly meaningful and how many require analysis of some kind? How are the results communicated and used? |
| Stability | Are the measured effects transient or permanent? |
| Organization Support | Is there a regular inspection scheme or schedule? Is it properly resourced? Where does this measurement fit into the management review? |

Key risk indicators come into play here.

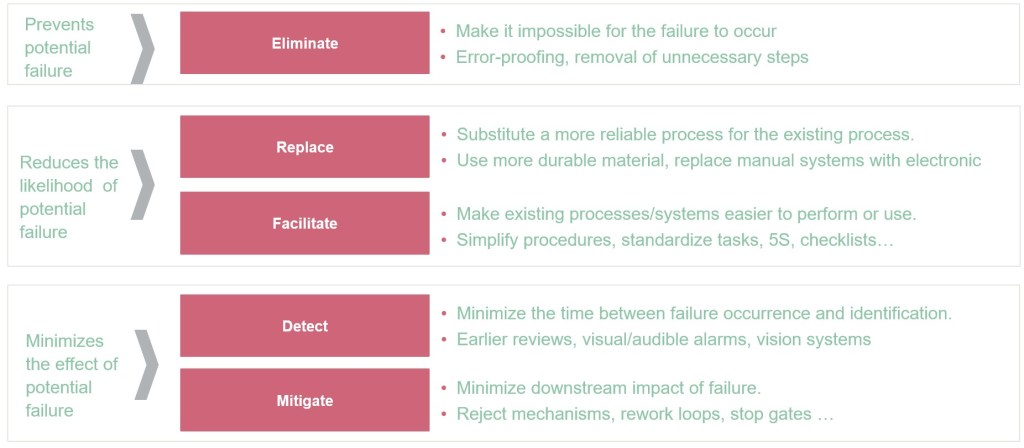
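A couple of the questions in the table, the leading versus lagging mix and the acceptability of the lag, are easy to check mechanically once the indicators are written down. The sketch below assumes a hypothetical `Indicator` record and made-up example indicators and lag limits; it is an illustration of the review, not a prescribed method.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Indicator:
    """Hypothetical record of one monitoring indicator."""
    name: str
    leading: bool
    lag_days: Optional[float] = None             # typical lag (lagging only)
    acceptable_lag_days: Optional[float] = None  # limit set by the organization


def review(indicators):
    """Summarize the leading/lagging mix and flag lags beyond their limit."""
    leading = [i for i in indicators if i.leading]
    lagging = [i for i in indicators if not i.leading]
    too_slow = [
        i.name for i in lagging
        if i.lag_days is not None
        and i.acceptable_lag_days is not None
        and i.lag_days > i.acceptable_lag_days
    ]
    return {
        "leading": len(leading),
        "lagging": len(lagging),
        "lag_too_long": too_slow,
        "no_leading_indicators": not leading,
    }


# Made-up example indicators.
indicators = [
    Indicator("near-miss reports per month", leading=True),
    Indicator("confirmed complaints", leading=False,
              lag_days=45, acceptable_lag_days=30),
    Indicator("batch rejections", leading=False,
              lag_days=10, acceptable_lag_days=30),
]
print(review(indicators))
# {'leading': 1, 'lagging': 2, 'lag_too_long': ['confirmed complaints'], 'no_leading_indicators': False}
```

The remaining questions, such as validity and organizational support, still need judgment; a summary like this only tells you where to start asking.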

Hierarchy of Controls

Not every control is the same. This principle applies both to assessing current controls and to planning future controls.